Nearly 40% of construction disputes in England and Wales involve party wall matters, and as AI tools enter the surveyor's toolkit, getting AI governance right matters more than ever. On 9 March 2026, the Royal Institution of Chartered Surveyors (RICS) made its landmark Responsible Use of Artificial Intelligence in Surveying Practice standard mandatory for all members and regulated firms worldwide [1]. For party wall practitioners, this creates both opportunity and obligation: AI can sharpen structural risk modelling and streamline award drafting, but only when it is deployed with rigorous bias detection and transparent oversight. This article explains how the standard applies to bias detection and ethical award drafting in party wall practice, and what surveyors must do to stay compliant.

Key Takeaways 📌

- The RICS mandatory AI standard took effect 9 March 2026, covering all surveying disciplines including party wall practice [1].

- Firms must maintain a risk register reviewed at least quarterly, documenting AI use, potential biases, and alternative approaches [2][4].

- Surveyors cannot take AI outputs at face value: professional scepticism and personal accountability remain non-negotiable [1][3].

- Clients must be informed in writing about how AI is used in their case, with options to opt out [1].

- Bias in AI-generated structural risk models or award drafts can invalidate professional advice and expose firms to liability.

What the RICS March 2026 AI Standard Actually Requires

The RICS Responsible Use of Artificial Intelligence in Surveying Practice professional standard was first introduced in September 2025 before becoming mandatory on 9 March 2026 [3]. It applies globally to every RICS member and regulated firm, across valuation, construction, infrastructure, and land services [1]. Party wall surveyors are firmly within scope.

The standard is not a suggestion. It is a mandatory professional obligation, and non-compliance carries the same disciplinary weight as any other breach of RICS conduct rules.

Core Obligations at a Glance

| Obligation | Detail |
|---|---|
| Risk Register | Must be created, maintained, and reviewed at least quarterly [2][4] |
| Written Records | Document AI system used, risks, benefits, and alternatives before deployment [4] |
| Material Impact Assessment | Determine in writing whether AI had a material impact on service delivery [2] |
| Client Notification | Inform clients in writing of AI use, including opt-out options [1] |
| Professional Accountability | Surveyors remain fully accountable for all outputs; no hiding behind the algorithm [1][3] |
| Audit Trail | Clients have a right to understand how advice was generated [3] |

💬 "Clients have a right to understand how the advice they are receiving has been generated." — RICS, 2025 [3]

For party wall surveyors, these obligations translate into specific workflow changes. Every time an AI tool is used — whether for structural crack analysis, schedule of condition drafting, or award clause generation — the above framework must be applied.

Applying the Standard to Bias Detection and Ethical Award Drafting

Understanding AI Tools in Party Wall Practice

AI is entering party wall work through several channels:

- 🏗️ Structural risk modelling — algorithms that assess crack propagation, foundation movement, and load-bearing wall stress based on input data

- 📋 Schedule of condition automation — AI-assisted photo analysis and condition grading

- 📝 Award drafting tools — natural language generation systems that produce draft party wall awards based on project parameters

- 📊 Dispute risk scoring — predictive tools that flag likely areas of neighbour disagreement

Each of these carries distinct bias and failure risks that the RICS standard directly addresses.

Understanding what a party wall surveyor does and when you need one is the essential starting point before layering AI tools into the process. The surveyor's professional judgment remains the backbone of any compliant engagement.

Bias Detection: Where AI Can Go Wrong in Party Wall Contexts

The RICS standard explicitly identifies bias outputs resulting from poor data sets as a key risk [3]. In party wall practice, this is not abstract — it has real consequences for building owners and adjoining owners alike.

Common sources of AI bias in party wall surveys include:

- Training data skewed toward certain property types: an AI model trained predominantly on Victorian terraced houses may produce unreliable structural risk assessments for post-war semi-detached properties or modern timber-frame construction.

- Geographic data gaps: models trained on London property data may misread risk levels in regional markets with different soil conditions, build quality, or construction eras.

- Historical underreporting bias: if past schedules of condition systematically under-recorded damage in certain property categories, AI trained on that data will replicate those blind spots.

- Confirmation bias amplification: AI tools can entrench the assumptions of whoever configured them, producing awards that systematically favour one party's framing of a dispute.

The RICS standard requires firms to assess stakeholder impact and bias risk as part of their pre-deployment evaluation [3]. For party wall work, this means surveyors must actively interrogate AI outputs rather than accepting them as neutral.

⚠️ Key principle: AI is only as unbiased as the data it was trained on. A surveyor who accepts a biased AI output without scrutiny is professionally responsible for that bias.

Practical Bias Detection Steps for Party Wall Surveyors

Before deploying any AI tool in a party wall instruction, surveyors should ask:

- What data was this model trained on? Is it representative of the property type and location in this case?

- Has the vendor disclosed known limitations or failure modes?

- Does the AI output align with my on-site observations? If not, why not?

- Would a different tool produce a materially different result?

- Have I documented my assessment of the AI's reliability for this specific task? [4]

This last point is critical. The RICS standard requires firms to record in writing the identifiable application of the AI system, potential risks and benefits, and alternative approaches considered before deployment [4]. This is not optional paperwork — it is the foundation of a defensible audit trail.
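The checklist above lends itself to a structured record completed before a tool is used on an instruction. The Python sketch below is illustrative only: the class name `PreDeploymentAssessment`, its fields, the example tool name, and the rule that any blank or negative answer counts as an open issue are assumptions for this example, not terms taken from the RICS standard.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


# Hypothetical pre-deployment record; field names are illustrative
# assumptions, not terminology from the RICS standard.
@dataclass
class PreDeploymentAssessment:
    tool_name: str
    intended_application: str
    assessed_on: date
    # Checklist answers: True = yes, False = no, None = not yet answered.
    training_data_representative: Optional[bool] = None
    vendor_limitations_disclosed: Optional[bool] = None
    consistent_with_site_observations: Optional[bool] = None
    alternative_tool_compared: Optional[bool] = None
    # Written record of risks, benefits and alternatives considered.
    risks: list[str] = field(default_factory=list)
    benefits: list[str] = field(default_factory=list)
    alternatives_considered: list[str] = field(default_factory=list)

    def open_issues(self) -> list[str]:
        """List checklist items answered 'no' or left blank, plus empty record sections."""
        checks = {
            "training data representative of property type and location": self.training_data_representative,
            "vendor limitations disclosed": self.vendor_limitations_disclosed,
            "output consistent with site observations": self.consistent_with_site_observations,
            "alternative tool compared": self.alternative_tool_compared,
        }
        issues = [label for label, answer in checks.items() if answer is not True]
        for label, entries in (
            ("risks", self.risks),
            ("benefits", self.benefits),
            ("alternatives considered", self.alternatives_considered),
        ):
            if not entries:
                issues.append(f"{label} not recorded")
        return issues


assessment = PreDeploymentAssessment(
    tool_name="ExampleCrackGrader",  # hypothetical tool name
    intended_application="crack severity grading for a schedule of condition",
    assessed_on=date(2026, 3, 10),
    training_data_representative=True,
    vendor_limitations_disclosed=False,
    consistent_with_site_observations=True,
    risks=["may under-grade hairline cracks in render"],
    benefits=["consistent grading across photographs"],
)
print(assessment.open_issues())
```

An empty list would show only that the record is complete, not that the tool is suitable for the instruction; that judgment stays with the surveyor.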

For complex projects such as basement excavations, where structural risk modelling is particularly high-stakes, the need for rigorous AI oversight is even more acute. Surveyors handling basement works under the Party Wall Act should treat AI-generated risk outputs as a starting point for professional analysis, never as a conclusion.

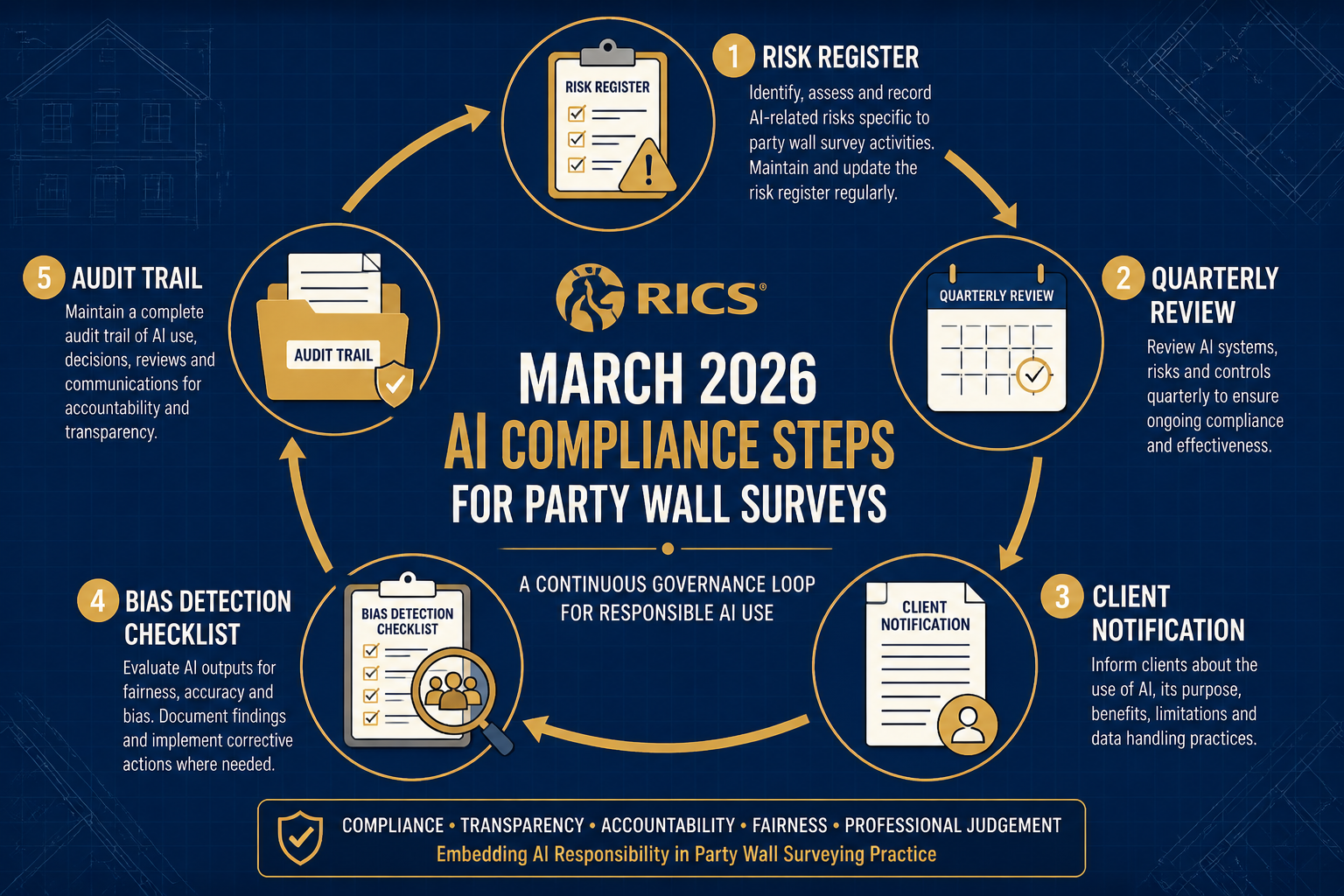

Risk Registers, Governance, and the Quarterly Review Cycle

Building a Compliant AI Risk Register

The RICS standard is explicit: firms must develop and implement responsible AI use policies informed by a risk register, and that register must be reviewed and updated at least quarterly [2][4]. For party wall practices, this is a new operational requirement that demands structured attention.

A compliant risk register for AI use in party wall surveying should cover:

| Risk Category | Example Entry | Mitigation |
|---|---|---|
| Data bias | AI trained on incomplete regional datasets | Cross-check with manual assessment; document limitations |
| Confidentiality | Client data uploaded to third-party AI platforms | Use data processing agreements; anonymise inputs where possible |
| Inaccurate outputs | AI misclassifies crack severity in schedule of condition | Mandatory surveyor review before any AI output is used |
| Award validity | AI-drafted award clause contains legally ambiguous language | Legal review of all AI-generated award text |
| Over-reliance | Junior surveyors accepting AI outputs without scrutiny | Training protocols; senior sign-off requirements |

The standard recognises that loss of confidential client data and inaccurate recommendations are among the most significant associated risks [3]. In party wall practice, confidentiality is paramount — details of a neighbour's property condition, structural vulnerabilities, and dispute history are sensitive by nature.
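One way to keep the quarterly review cycle visible is to hold the register as structured data with a review date. In this Python sketch the class names, the 91-day approximation of "at least quarterly", and the set of expected categories (copied from the example register table above) are assumptions for illustration; a firm would set its own categories and review calendar.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumption: "reviewed at least quarterly" approximated as every 91 days.
REVIEW_INTERVAL = timedelta(days=91)

# Categories from the example register table; a firm would set its own.
EXPECTED_CATEGORIES = {
    "Data bias", "Confidentiality", "Inaccurate outputs", "Award validity", "Over-reliance",
}


@dataclass
class RiskEntry:
    category: str
    example: str
    mitigation: str


@dataclass
class RiskRegister:
    entries: list[RiskEntry]
    last_reviewed: date

    def next_review_due(self) -> date:
        """Latest date by which the next full review should take place."""
        return self.last_reviewed + REVIEW_INTERVAL

    def review_overdue(self, today: date) -> bool:
        return today > self.next_review_due()

    def missing_categories(self) -> set[str]:
        """Expected categories that have no entry in the register."""
        return EXPECTED_CATEGORIES - {e.category for e in self.entries}


register = RiskRegister(
    entries=[
        RiskEntry("Data bias", "AI trained on incomplete regional datasets",
                  "Cross-check with manual assessment; document limitations"),
        RiskEntry("Confidentiality", "Client data uploaded to third-party AI platforms",
                  "Data processing agreements; anonymise inputs where possible"),
    ],
    last_reviewed=date(2026, 3, 9),
)
print(register.next_review_due())  # 2026-06-08
print(sorted(register.missing_categories()))
```

Tracking the review date at register level, rather than per entry, mirrors the requirement that the register as a whole is reviewed and updated; per-entry dates would be a reasonable refinement.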

Environmental and Stakeholder Impact Assessment

The RICS standard requires firms to consider environmental impact and stakeholder impact when assessing AI suitability [3]. For party wall surveyors, stakeholder impact is particularly relevant: the adjoining owner, the building owner, and any third parties affected by the works all have legitimate interests in how AI shapes the advice they receive.

Surveyors must assess whether AI use could disadvantage any party — for example, by producing a schedule of condition that systematically underestimates pre-existing damage, or an award that fails to adequately protect the adjoining owner's rights.

Understanding the consequences of ignoring the Party Wall Act underscores why accuracy in party wall documentation is non-negotiable. AI-generated errors in this context can have serious legal and financial consequences for all parties.

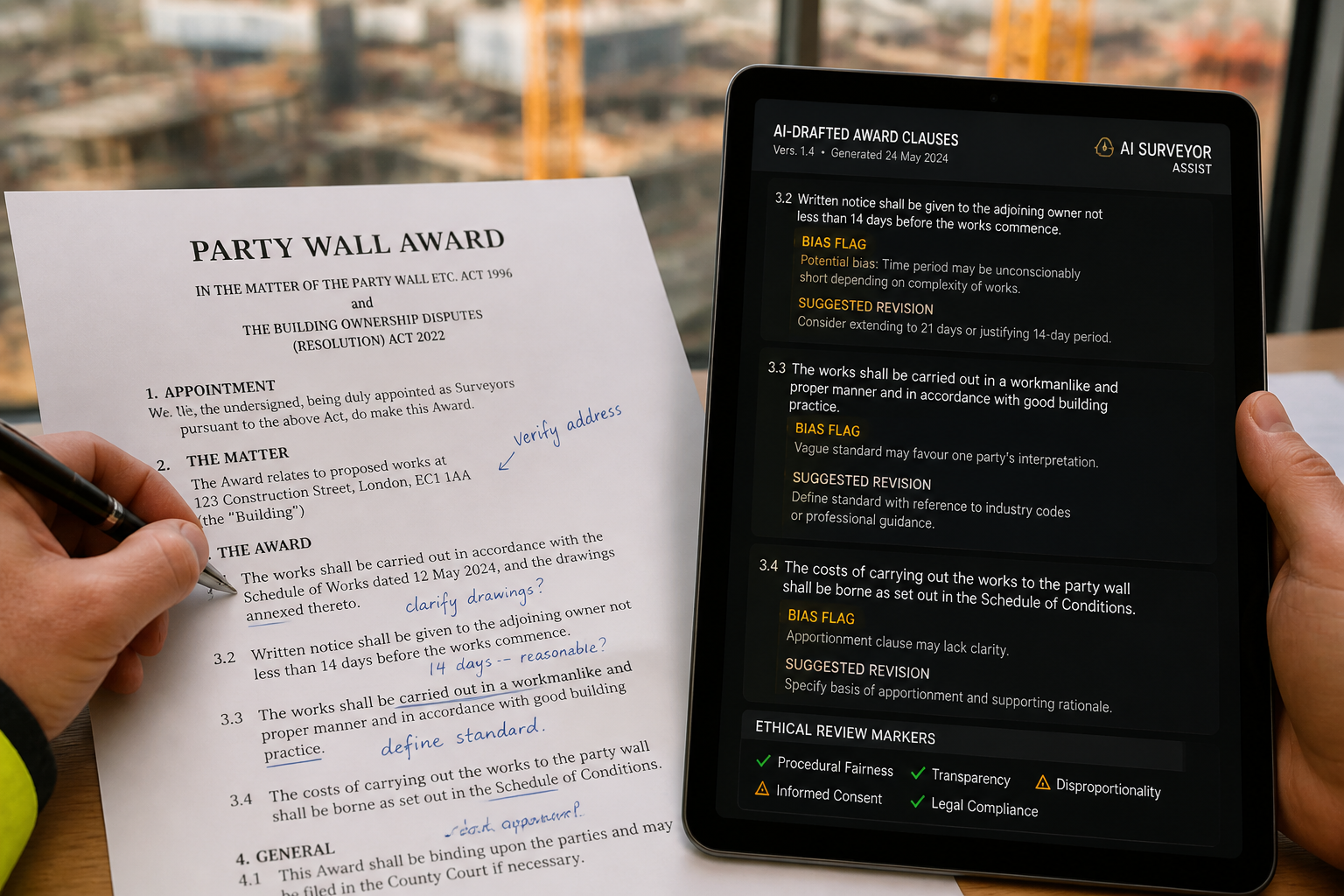

Ethical Award Drafting: Keeping AI in Its Proper Lane

The Role of AI in Drafting Party Wall Awards

AI natural language generation tools can produce draft party wall award text quickly and consistently. This has genuine efficiency benefits — particularly for straightforward cases with standard working method clauses. However, the RICS standard makes clear that surveyors must assess the reliability of AI outputs and remain accountable for all work [1][3].

In award drafting, this means:

- ✅ AI can generate a first draft of standard clauses

- ✅ AI can flag missing provisions based on project parameters

- ✅ AI can assist with consistency checking across multiple awards

- ❌ AI cannot replace the surveyor's judgment on dispute resolution

- ❌ AI cannot be cited as the author of a legally binding award

- ❌ AI outputs cannot be used without a documented professional review

The essentials of any party wall agreement remain grounded in human professional judgment: AI is a drafting aid, not a decision-maker.

Client Transparency and the Right to Opt Out

One of the most operationally significant requirements of the RICS standard is the mandatory client notification obligation. Firms must inform clients in writing of when and how AI will be used in service delivery, including options for redress or opting out [1].

For party wall practices, this means:

- Engagement letters must disclose AI tool use where it is anticipated

- Mid-instruction notifications are required if AI use is introduced after engagement

- Opt-out mechanisms must be genuine — clients who decline AI use must receive a service that does not depend on it

- Audit trail documentation must be available to clients on request [3]

This transparency requirement extends to both the building owner and the adjoining owner in a party wall matter. Where a single agreed surveyor is appointed to act for both owners, that surveyor's AI use must be disclosed to both parties.
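Where more than one owner is involved, a firm may want a simple check before any AI tool is used on the instruction. The Python sketch below assumes a conservative firm policy that is not stated in the RICS standard: AI is used only if every party has received written notice and none has opted out. The `PartyNotice` class and `ai_use_permitted` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PartyNotice:
    party: str                 # e.g. "building owner", "adjoining owner"
    notified_in_writing: bool  # written AI-use disclosure sent and recorded
    opted_out: bool            # party has declined AI use


def ai_use_permitted(notices: list[PartyNotice]) -> bool:
    """Hypothetical firm policy: permit AI only if every party has been
    notified in writing and no party has opted out. An empty list
    (no parties recorded) is treated as not permitted."""
    return bool(notices) and all(
        n.notified_in_writing and not n.opted_out for n in notices
    )


agreed_surveyor_instruction = [
    PartyNotice("building owner", notified_in_writing=True, opted_out=False),
    PartyNotice("adjoining owner", notified_in_writing=True, opted_out=True),
]
print(ai_use_permitted(agreed_surveyor_instruction))  # False
```

When the check fails, the instruction would proceed on a workflow that does not depend on AI, consistent with the requirement that opt-outs be genuine.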

Documenting Material Impact: The Written Determination Requirement

The RICS standard requires firms to determine whether AI use has had a material impact on the delivery of surveying services and to make a written record of that determination with reasoning [2]. This is a nuanced but important obligation.

In party wall practice, material impact might include:

- An AI structural risk model that changed the recommended working method

- An AI schedule of condition tool that identified damage the surveyor might otherwise have missed

- An AI drafting tool that produced award clauses materially different from what the surveyor would have written independently

Where material impact is found, the written record must explain how it influenced the final advice. Where no material impact is found, that too must be documented — along with the reasoning.

This documentation discipline protects surveyors in dispute situations. If a party wall award is later challenged, a clear audit trail of AI use, bias assessment, and professional review is a significant asset.
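The two-branch rule described above (explain the influence on the final advice where impact is material; record the reasoning in every case) can be captured as a simple completeness check. This Python sketch is illustrative; the class name, field names, and example references are assumptions, not wording from the RICS standard.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class MaterialImpactDetermination:
    instruction_ref: str            # hypothetical internal reference
    tool_name: str
    determined_on: date
    material_impact: bool
    reasoning: str                  # required in every case
    influence_on_advice: str = ""   # required only where impact is material

    def problems(self) -> list[str]:
        """Return gaps that would leave the written determination incomplete."""
        issues = []
        if not self.reasoning.strip():
            issues.append("reasoning missing")
        if self.material_impact and not self.influence_on_advice.strip():
            issues.append("influence on final advice not explained")
        return issues


d = MaterialImpactDetermination(
    instruction_ref="PW-0001",
    tool_name="ExampleRiskModel",   # hypothetical tool name
    determined_on=date(2026, 4, 2),
    material_impact=True,
    reasoning="Model output changed the recommended working method.",
)
print(d.problems())  # ['influence on final advice not explained']
```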

For surveyors managing complex or contentious cases, including situations where a neighbour refuses consent to party wall works, thorough documentation of every professional decision, AI-assisted ones included, is essential protection.

Implementation Roadmap for Party Wall Practices

A Practical Six-Step Implementation Plan

For party wall surveying firms looking to achieve compliance with the RICS March 2026 AI standard, the following roadmap provides a structured starting point:

Step 1: Audit Current AI Tool Use 🔍

Identify every AI tool currently used in party wall practice — including tools embedded in software platforms that surveyors may not immediately recognise as "AI."

Step 2: Build the Risk Register 📋

Create a documented risk register covering all identified AI tools, with entries for data bias, confidentiality, output accuracy, and stakeholder impact. Schedule quarterly reviews.

Step 3: Update Engagement Letters 📄

Revise standard engagement documentation to include AI disclosure language and opt-out provisions. Ensure this covers both building owner and adjoining owner communications.

Step 4: Establish Review Protocols ✅

Define mandatory human review requirements for every AI output used in party wall work — structural risk models, schedules of condition, and award drafts alike.

Step 5: Train the Team 🎓

Ensure all surveyors — including junior staff — understand that AI outputs require professional scrutiny. The RICS standard places accountability with the individual professional, not the technology [1][3].

Step 6: Document Everything 🗂️

For each instruction where AI is used, maintain a written record of: the tool used, the task it performed, the bias/reliability assessment, the material impact determination, and the professional review outcome.
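The Step 6 record, combined with the rule that no AI output enters an award without documented professional review, can be sketched as a pre-issue check. The Python below is a hypothetical illustration: `AIUseRecord`, `incomplete_records`, `ready_to_issue`, the tool names and the reviewer name are assumptions, and a passing check confirms only that the paperwork is complete, not that the advice is sound.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIUseRecord:
    tool: str
    task: str
    reliability_assessment: str          # bias/reliability assessment summary
    material_impact_determination: str   # written determination with reasoning
    reviewed_by: Optional[str] = None    # surveyor who reviewed the output
    review_outcome: Optional[str] = None  # e.g. "accepted", "amended", "rejected"


def incomplete_records(records: list[AIUseRecord]) -> list[str]:
    """Describe each record that lacks any of the Step 6 elements."""
    gaps = []
    for r in records:
        missing = [
            name
            for name, value in (
                ("reliability assessment", r.reliability_assessment),
                ("material impact determination", r.material_impact_determination),
                ("reviewer", r.reviewed_by),
                ("review outcome", r.review_outcome),
            )
            if not value
        ]
        if missing:
            gaps.append(f"{r.tool} ({r.task}): missing {', '.join(missing)}")
    return gaps


def ready_to_issue(records: list[AIUseRecord]) -> bool:
    """Hypothetical gate: the award is not issued until every record is complete."""
    return not incomplete_records(records)


records = [
    AIUseRecord("ExampleCrackGrader", "schedule of condition grading",
                "Checked against site photographs",
                "No material impact: grades matched site notes",
                reviewed_by="J. Smith", review_outcome="accepted"),
    AIUseRecord("ExampleDraftTool", "award clause drafting",
                "Standard clauses only", "Material: access clause reworded"),
]
for gap in incomplete_records(records):
    print(gap)
print(ready_to_issue(records))  # False
```

Here the second record blocks issue because no surveyor has yet signed off the AI-drafted clause.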

A well-executed party wall schedule of condition is one of the most important documents in any party wall matter. When AI assists in producing it, that assistance must be documented, reviewed, and disclosed — without exception.

Conclusion: Professional Judgment Remains the Non-Negotiable Core

The RICS March 2026 AI standard does not prohibit AI use in party wall surveying; far from it. It creates a framework within which AI can be used responsibly, transparently, and accountably. For firms that embrace this framework, AI tools offer genuine efficiency gains in structural risk modelling, schedule of condition documentation, and award drafting.

But the standard is unambiguous on one point: surveyors remain fully accountable for every piece of advice they deliver, regardless of whether AI assisted in producing it [1][3]. The algorithm cannot be blamed. The risk register cannot be cited as a defence. Only rigorous professional judgment, applied at every stage of the AI workflow, satisfies the standard.

Actionable Next Steps for Party Wall Practitioners in 2026:

- Review your current AI tool inventory — identify every tool in use and assess it against the RICS risk criteria

- Create or update your firm's AI risk register — ensure quarterly review dates are diarised

- Revise client engagement documentation — add AI disclosure and opt-out language immediately

- Establish a written review protocol — no AI output goes into a party wall award without documented professional scrutiny

- Stay current with RICS guidance — the standard will evolve as AI technology develops; monitor RICS updates actively

The integration of AI into party wall practice is not a future possibility — it is a 2026 reality. The firms that will thrive are those that treat the RICS AI standard not as a compliance burden, but as a professional quality framework that strengthens the reliability and credibility of their work.

References

[1] RICS Launches Landmark Global Standard on Responsible Use of AI in Surveying – https://www.rics.org/news-insights/rics-launches-landmark-global-standard-on-responsible-use-of-ai-in-surveying

[2] AI Responsible Use Standard – https://ww3.rics.org/uk/en/journals/construction-journal/ai-responsible-use-standard.html

[3] RICS Responsible Use of AI Explained for APC Candidates – https://resources.apcguide.com/rics-responsible-use-of-ai-explained-for-apc-candidates/

[4] Responsible Use of Artificial Intelligence in Surveying Practice September 2025 – https://www.rics.org/content/dam/ricsglobal/documents/standards/Responsible-use-of-artificial-intelligence-in-surveying-practice_September-2025.pdf