The surveying profession stands at a technological crossroads in 2026. As artificial intelligence transforms how building surveyors assess properties, valuers determine market values, and expert witnesses prepare reports, the Royal Institution of Chartered Surveyors (RICS) has introduced a landmark regulatory framework. The RICS AI Responsible Use Standard, which took effect in March 2026, establishes mandatory compliance requirements for all RICS members and regulated firms using AI systems in their surveying practice.[1]

The standard addresses the growing integration of AI across valuation, construction, infrastructure, and land services, sectors where accuracy, accountability, and professional judgment remain paramount.[2] For surveyors navigating this new regulatory landscape, understanding the standard is no longer optional: it is essential for maintaining professional standing and delivering compliant services.

Key Takeaways

✅ Mandatory Compliance: All RICS members and regulated firms must comply with the standard when using AI systems that materially impact surveying service delivery[1]

📋 Documentation Requirements: Firms must maintain written records including material impact assessments, risk registers reviewed quarterly, and system governance evaluations[4]

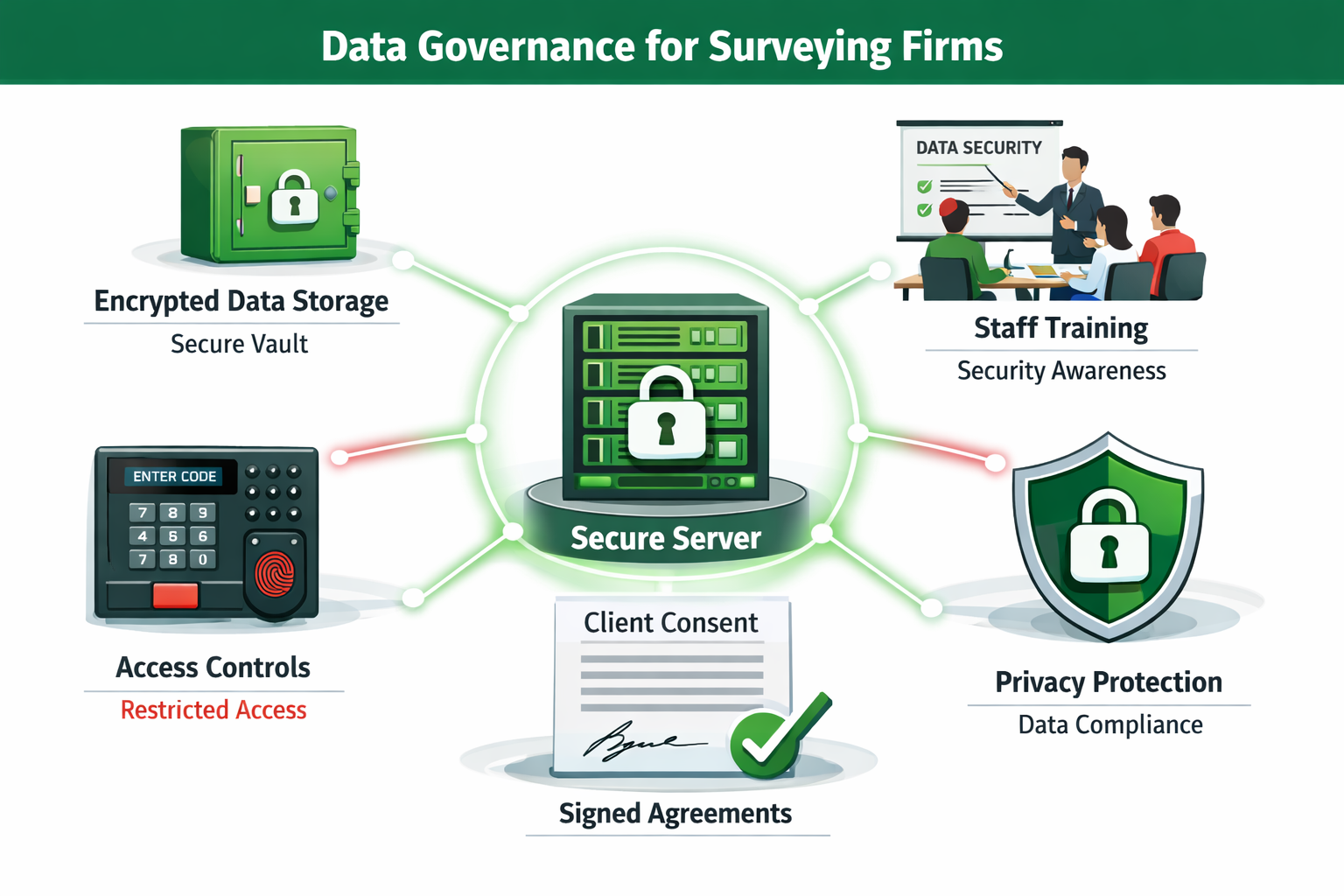

🔒 Data Protection Obligations: Strict data governance requirements mandate secure storage, restricted access, staff training, and written consent before uploading sensitive information to AI systems[1]

💬 Client Transparency: Written client communication is mandatory, informing clients when and how AI will be used, with options for redress or opting out[2]

⚖️ Professional Accountability: Surveyors remain fully accountable for all work outputs, requiring professional skepticism and expert judgment when assessing AI-generated results[2]

Understanding the RICS AI Responsible Use Standard Framework

The RICS AI Responsible Use Standard represents the first comprehensive regulatory framework specifically designed for AI use in surveying practice. Unlike general data protection regulations, this standard addresses the unique professional obligations surveyors face when integrating AI into their workflows.

Who Must Comply?

The standard applies universally to:

- RICS members working in any surveying discipline

- Regulated firms under RICS oversight

- All jurisdictions where RICS members practice, alongside applicable local legislation[1]

This broad applicability ensures consistent professional standards across building surveys, property valuations, expert witness work, and construction consultancy. Whether conducting a Level 3 full building survey or preparing insurance valuations, surveyors using AI tools must demonstrate compliance.

Core Principles of Responsible AI Use

The standard establishes five foundational principles:

- Transparency 🔍 – Clear disclosure of AI use to clients and stakeholders

- Accountability ⚖️ – Professional responsibility for all AI-assisted outputs

- Fairness 🤝 – Mitigation of bias and discriminatory outcomes

- Security 🔒 – Robust data protection and privacy safeguards

- Quality Assurance ✓ – Systematic validation of AI system outputs

These principles underpin every requirement within the standard, creating a framework that balances technological innovation with professional integrity.

Material Impact Assessment: The Critical First Step for RICS AI Responsible Use Standard Compliance

Before implementing any AI system, firms must determine whether its use has a "material impact" on surveying service delivery. This assessment forms the cornerstone of compliance with the RICS AI Responsible Use Standard.

Defining Material Impact

Material impact occurs when AI systems:

- Influence professional judgments in property assessments or valuations

- Generate content included in client-facing reports or documentation

- Process sensitive data related to property ownership, condition, or value

- Automate decisions that would traditionally require surveyor expertise

- Affect service quality or the reliability of professional outputs

For example, using AI to analyze building defects identified during a building survey would typically constitute material impact, whereas using AI for basic administrative scheduling might not.

Documentation Requirements

When material impact is established, firms must create and maintain written records documenting:[1]

| Documentation Element | Description | Review Frequency |
|---|---|---|
| Impact Determination | Formal assessment explaining why AI use is material | At implementation |
| Reasoning Statement | Detailed justification for the determination | At implementation |
| System Description | Technical specifications and capabilities | Annually |
| Use Case Documentation | Specific applications within surveying practice | Per project |
| Risk Assessment | Identified risks and mitigation strategies | Quarterly |

This documentation serves multiple purposes: demonstrating due diligence, supporting professional indemnity insurance claims, and providing evidence of compliance during RICS audits or client inquiries.

Practical Assessment Framework

Surveyors should apply this decision tree when evaluating material impact:

Question 1: Does the AI system process client data or property information?

- If NO → Likely not material impact

- If YES → Proceed to Question 2

Question 2: Do AI outputs directly influence professional recommendations?

- If NO → May not be material impact (document reasoning)

- If YES → Proceed to Question 3

Question 3: Would clients reasonably expect disclosure of this AI use?

- If YES → Definitely material impact

- If NO → Document why disclosure is unnecessary

This systematic approach ensures consistent determinations across different surveying contexts, from property snagging surveys to complex valuation assignments.
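
The three questions above can be sketched as a small function. This is only an illustration of the decision tree as described in this article, not wording taken from the standard; the class name, field names, and outcome labels are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AIUseCase:
    """Answers to the three screening questions (field names are illustrative)."""
    name: str
    processes_client_or_property_data: bool   # Question 1
    influences_recommendations: bool          # Question 2
    client_would_expect_disclosure: bool      # Question 3


def assess_material_impact(use_case: AIUseCase) -> str:
    """Walk the questions in order and return the outcome label."""
    if not use_case.processes_client_or_property_data:
        return "likely not material"
    if not use_case.influences_recommendations:
        return "may not be material; document reasoning"
    if use_case.client_would_expect_disclosure:
        return "material"
    return "document why disclosure is unnecessary"


if __name__ == "__main__":
    defect_tool = AIUseCase("defect detection", True, True, True)
    print(assess_material_impact(defect_tool))  # prints "material"
```

Whatever the outcome, the reasoning still needs to be written down; the function only makes the order of the questions explicit.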

Mandatory Risk Management and Governance Requirements

Once material impact is established, the RICS AI Responsible Use Standard mandates comprehensive risk management protocols.

Risk Register Requirements

Every firm using AI in materially impactful ways must establish and maintain a written risk register that:[4]

- Identifies specific risks associated with each AI system

- Assesses likelihood and severity of potential failures

- Documents mitigation strategies and controls

- Assigns responsibility for risk monitoring

- Reviews findings at least quarterly

The quarterly review requirement ensures firms remain responsive to evolving AI capabilities, emerging vulnerabilities, and lessons learned from operational experience.
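
As a rough sketch of how a firm might track the register elements listed above and flag entries whose review is overdue, consider the following. The field names, the 1 to 5 scoring scales, and the 92-day approximation of "quarterly" are assumptions made for illustration, not requirements of the standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumption: "at least quarterly" is approximated as 92 days.
REVIEW_INTERVAL = timedelta(days=92)


@dataclass
class RiskEntry:
    system: str          # AI system the risk relates to
    risk: str            # description of the identified risk
    likelihood: int      # 1 (rare) to 5 (almost certain); scale is illustrative
    severity: int        # 1 (minor) to 5 (severe); scale is illustrative
    mitigation: str      # documented control or mitigation strategy
    owner: str           # person responsible for monitoring
    last_reviewed: date

    def score(self) -> int:
        """Simple likelihood x severity rating."""
        return self.likelihood * self.severity

    def review_overdue(self, today: date) -> bool:
        """True when more than one review interval has passed."""
        return today - self.last_reviewed > REVIEW_INTERVAL


def overdue_entries(register: list[RiskEntry], today: date) -> list[RiskEntry]:
    """Return overdue entries, highest-scoring risks first."""
    overdue = [entry for entry in register if entry.review_overdue(today)]
    return sorted(overdue, key=lambda entry: entry.score(), reverse=True)
```

Running `overdue_entries` at the start of each review meeting gives a prioritized list of what must be looked at first.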

Sample Risk Register Categories

Technical Risks ⚙️

- Algorithm accuracy and reliability

- Data quality and completeness

- System integration failures

- Output validation challenges

Professional Risks 📊

- Misinterpretation of AI recommendations

- Over-reliance on automated assessments

- Insufficient professional skepticism

- Competence gaps in AI literacy

Regulatory Risks 📋

- Non-compliance with RICS standards

- Data protection violations

- Client consent inadequacies

- Documentation deficiencies

Reputational Risks 🏢

- Client dissatisfaction with AI use

- Professional negligence claims

- Market perception of quality

- Competitive disadvantage

System Governance Assessment

Before deploying any AI system, firms must conduct and record comprehensive system governance assessments covering:[1][2]

Procurement Due Diligence

- Vendor reputation and track record

- System development methodology

- Quality assurance processes

- Support and maintenance commitments

Technical Evaluation

- Algorithm transparency and explainability

- Training data quality and bias assessment

- Performance benchmarks and validation testing

- Integration compatibility with existing systems

Legal Compliance Review

- Data protection regulation adherence

- Intellectual property considerations

- Contractual liability provisions

- Insurance coverage implications

Sustainability Impact

- Environmental footprint of AI processing

- Long-term viability of the technology

- Vendor sustainability commitments

- Alternative solution comparisons

This thorough assessment process mirrors the professional diligence surveyors apply when conducting building defects surveys—systematic, documented, and defensible.

Responsible AI Use Policies

All RICS-regulated firms must develop and implement responsible AI use policies informed by their risk registers.[1] These policies should address:

- Acceptable use cases for AI within surveying practice

- Prohibited applications where AI is deemed inappropriate

- Training requirements for staff using AI systems

- Quality assurance protocols including output validation

- Client communication standards for AI disclosure

- Incident response procedures when AI systems fail or produce questionable results

- Continuous improvement mechanisms based on operational feedback

Effective policies balance innovation with professional standards, enabling surveyors to leverage AI benefits while maintaining the integrity that clients expect when commissioning professional building surveys.

Data Governance and Privacy Protection Under the RICS AI Standard

Data governance is one of the most stringent aspects of the RICS AI Responsible Use Standard. Given the sensitive nature of property information, ownership details, and structural assessments, the standard establishes comprehensive data protection requirements.

Core Data Protection Obligations

Firms must implement multiple layers of data safeguards:[1]

Secure Storage 🔐

- Encrypted databases for all property and client information

- Access controls limiting data availability to authorized personnel only

- Regular security audits and vulnerability assessments

- Backup and disaster recovery protocols

Access Restrictions 👥

- Role-based permissions tied to job functions

- Multi-factor authentication for system access

- Audit trails tracking who accessed what data and when

- Immediate revocation of access for departing staff

Staff Training 📚

- Regular data protection training for all personnel

- AI-specific privacy awareness programs

- Incident reporting procedures

- Confidentiality agreement renewals

Privacy Protection 🛡️

- Data minimization principles (collect only necessary information)

- Anonymization techniques where feasible

- Clear data retention and deletion policies

- Privacy impact assessments for new AI implementations

Consent Requirements for AI Data Processing

The standard establishes a critical prohibition: firms must refrain from uploading sensitive data to AI systems without express written consent from affected stakeholders.[1]

This requirement has significant practical implications:

- Property owner consent before uploading structural reports to AI analysis tools

- Client authorization before processing valuation data through AI systems

- Third-party permissions when reports involve multiple stakeholders

- Documented consent trails maintained in client files

For surveyors accustomed to traditional workflows, this represents a fundamental shift. Before uploading photos from a roofing inspection to an AI defect detection system, explicit written consent must be obtained and documented.
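
One way to make the consent requirement hard to bypass is a check that runs before any upload. The sketch below assumes consent is recorded per stakeholder, per client file, and per AI system; that granularity, and every name in the code, is hypothetical rather than prescribed by the standard.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ConsentRecord:
    stakeholder: str      # who gave consent
    file_reference: str   # client file the consent belongs to
    ai_system: str        # AI system the consent covers
    signed_on: date       # date the written consent was signed


def missing_consents(stakeholders: list[str], file_reference: str,
                     ai_system: str, records: list[ConsentRecord]) -> list[str]:
    """List the stakeholders with no written consent on file for this upload."""
    consented = {
        record.stakeholder
        for record in records
        if record.file_reference == file_reference and record.ai_system == ai_system
    }
    return [name for name in stakeholders if name not in consented]


def may_upload(stakeholders: list[str], file_reference: str,
               ai_system: str, records: list[ConsentRecord]) -> bool:
    """Allow the upload only when every affected stakeholder has consented."""
    return not missing_consents(stakeholders, file_reference, ai_system, records)
```

Returning the names of the missing stakeholders, rather than a bare yes or no, tells the surveyor exactly whose consent still has to be obtained.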

Special Considerations for Cloud-Based AI

Many AI tools operate through cloud-based platforms, raising additional data governance concerns:

- Data sovereignty – Where is data physically stored and processed?

- Vendor access – Can the AI provider access uploaded property information?

- Data retention – How long does the vendor retain data after processing?

- Third-party sharing – Might data be used to train AI models or shared with others?

Surveyors must thoroughly investigate these questions during procurement and clearly communicate any data handling practices to clients.

Client Communication and Transparency Requirements

Transparency is a cornerstone of the RICS AI Responsible Use Standard. The standard recognizes that clients have a fundamental right to understand how AI influences the professional services they receive.

Mandatory Written Disclosure

Firms must inform clients in writing of:[2]

- When AI will be used in service delivery

- How AI will be applied to their specific project

- What AI systems will be employed

- Options for redress if clients have concerns

- Ability to opt out of AI-assisted services

This disclosure should occur before work commences, typically within engagement letters or terms of business. For surveyors conducting Level 2 or Level 3 surveys, this means updating standard documentation to include AI use disclosures.

Sample Disclosure Language

"In conducting your building survey, we may utilize artificial intelligence systems to assist with defect identification, risk assessment, and report preparation. Specifically, we use [AI System Name] to analyze building photographs and identify potential structural issues. All AI-generated insights are reviewed and validated by our qualified surveyors before inclusion in your report. You have the right to request a detailed explanation of our AI use and may opt out of AI-assisted services if preferred."

Explanation Rights

Beyond initial disclosure, firms must be able to provide written explanations of their AI use to clients upon request.[4] This requirement ensures ongoing transparency and accountability.

Surveyors should prepare to answer questions such as:

- How accurate is the AI system you're using?

- What training data was used to develop the AI?

- How do you validate AI-generated findings?

- What happens if the AI makes an error?

- Who is ultimately responsible for the report's accuracy?

Having standardized responses to these common questions streamlines compliance while building client confidence.

Opt-Out Provisions

The standard's opt-out requirement acknowledges that some clients may prefer traditional, entirely human-conducted services. Firms should:

- Clearly communicate opt-out procedures

- Ensure no penalty or service degradation for clients who opt out

- Document opt-out requests in client files

- Maintain capacity to deliver non-AI services when requested

This flexibility respects client autonomy while enabling firms to leverage AI where clients are comfortable with its use.

Professional Judgment and Quality Assurance in AI-Assisted Surveying

Despite AI's growing capabilities, the RICS AI Responsible Use Standard emphasizes that professional responsibility cannot be delegated to algorithms.

The Professional Skepticism Requirement

Surveyors must assess the reliability of AI outputs and remain accountable for all work, applying professional skepticism and expertise throughout the process.[2]

This means:

Never blindly accepting AI recommendations ❌

- AI-identified defects must be visually verified

- AI valuations require professional judgment validation

- AI risk assessments need contextual evaluation

Always applying professional expertise ✅

- Considering factors AI may not capture (local market conditions, property history, etc.)

- Recognizing AI limitations and edge cases

- Exercising independent judgment on all conclusions

For example, if AI analysis suggests subsidence risk based on crack patterns in a building survey, the surveyor must personally inspect the cracks, consider soil conditions, review historical movement, and apply professional judgment before confirming the diagnosis—just as they would when identifying common defects in older homes.

Quality Assurance Through Sampling

The standard requires procurement and responsible use policies to cover quality assurance through randomized dip-sampling of AI outputs.[4]

Effective sampling protocols include:

Frequency: Minimum 10% of AI-assisted work products reviewed in detail

Randomization: Selection process prevents predictability

Documentation: Findings recorded in quality assurance logs

Corrective Action: Identified issues trigger system review or retraining

Escalation: Repeated errors prompt suspension of AI use pending resolution

This systematic approach mirrors quality assurance practices in professional surveying firms, ensuring consistent service quality regardless of technology use.
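
A minimal sketch of randomized dip-sampling follows, using the 10% figure suggested above as the default rate. The function name and parameters are hypothetical, and rounding up to at least one item is a design assumption, not something the standard specifies.

```python
import random
from typing import Optional, Sequence


def dip_sample(work_ids: Sequence[str], rate_percent: int = 10,
               rng: Optional[random.Random] = None) -> list[str]:
    """Pick a random subset of AI-assisted work products for detailed review.

    The sample size is rate_percent of the batch, rounded up, so any
    non-empty batch yields at least one item.
    """
    if not 1 <= rate_percent <= 100:
        raise ValueError("rate_percent must be between 1 and 100")
    if not work_ids:
        return []
    # Integer ceiling division avoids floating-point rounding surprises.
    sample_size = (len(work_ids) * rate_percent + 99) // 100
    # SystemRandom draws from the OS entropy source, so picks are not predictable.
    rng = rng or random.SystemRandom()
    return rng.sample(list(work_ids), sample_size)


if __name__ == "__main__":
    reports = [f"REPORT-{n:03d}" for n in range(1, 26)]
    for report_id in dip_sample(reports):
        print("Review in detail:", report_id)
```

Passing a seeded `random.Random` instance makes a selection reproducible for audit purposes, while the default keeps the selection unpredictable to staff.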

Competence and Training Requirements

Surveyors using AI systems must maintain competence in:

- AI literacy – Understanding how AI systems work and their limitations

- Critical evaluation – Assessing AI output quality and reliability

- System operation – Proper use of AI tools within their intended parameters

- Risk awareness – Recognizing when AI may be unreliable or inappropriate

Firms should implement regular training programs covering these competencies, with refresher courses as AI technologies evolve.

Special Requirements for Firms Developing AI Systems

While most surveyors use third-party AI tools, some firms develop proprietary AI systems for internal use or commercial licensing. The RICS AI Responsible Use Standard establishes additional requirements for these developers.

Development-Specific Assessments

Firms developing AI systems must conduct assessments of:[2]

Data Quality 📊

- Training data representativeness and diversity

- Bias identification and mitigation strategies

- Data accuracy verification processes

- Ongoing data quality monitoring

Stakeholder Involvement 🤝

- Consultation with end-users during development

- Testing with diverse surveying scenarios

- Feedback incorporation mechanisms

- User acceptance validation

Sustainability Impact 🌍

- Environmental footprint of AI training and operation

- Energy efficiency optimization

- Long-term maintenance sustainability

- Lifecycle environmental assessment

Legal Compliance ⚖️

- Intellectual property clearances

- Data protection regulation adherence

- Professional liability considerations

- Contractual obligations to clients

These requirements ensure that surveyor-developed AI systems meet the same rigorous standards as commercial products, protecting both the developing firm and end-users.

Validation and Testing Protocols

Developer firms must implement comprehensive validation protocols before deployment:

- Accuracy testing against known ground truth datasets

- Edge case evaluation with unusual or challenging scenarios

- Bias assessment across different property types and locations

- Performance benchmarking against human expert judgments

- Failure mode analysis identifying potential error conditions

Documentation of these validation efforts becomes part of the system governance assessment required for all AI deployments.

Practical Implementation Strategies for Surveying Firms

Transitioning to compliance with the RICS AI Responsible Use Standard requires systematic planning and execution.

Implementation Roadmap

Phase 1: Assessment (Weeks 1-4) 🔍

- Inventory all current AI system usage

- Conduct material impact determinations

- Identify compliance gaps

- Prioritize remediation actions

Phase 2: Documentation (Weeks 5-8) 📋

- Develop risk registers

- Create responsible use policies

- Update client engagement templates

- Establish quality assurance protocols

Phase 3: Training (Weeks 9-12) 📚

- Staff AI literacy programs

- Data protection refresher training

- System-specific operational training

- Professional skepticism workshops

Phase 4: Deployment (Weeks 13-16) 🚀

- Implement updated policies and procedures

- Launch enhanced client communication protocols

- Activate quality assurance sampling

- Begin quarterly risk register reviews

Phase 5: Monitoring (Ongoing) 📊

- Quarterly risk register reviews

- Continuous quality assurance sampling

- Annual policy reviews and updates

- Emerging technology assessments

Resource Allocation

Firms should budget for:

- Personnel time for documentation development and training

- Technology investments in secure data storage and access controls

- External expertise for legal compliance review and AI system evaluation

- Ongoing monitoring resources for quality assurance and risk management

Smaller practices may find value in collaborating with peers or industry associations to share compliance resources and best practices.

Common Implementation Challenges

Challenge: Staff resistance to new documentation requirements

Solution: Emphasize risk mitigation benefits and professional protection

Challenge: Client concerns about AI use in professional services

Solution: Transparent communication highlighting human oversight and quality benefits

Challenge: Keeping pace with rapidly evolving AI technologies

Solution: Establish technology monitoring protocols and vendor relationship management

Challenge: Balancing innovation with compliance obligations

Solution: Implement staged AI adoption with thorough assessment at each stage

Enforcement, Consequences, and Professional Obligations

Understanding the enforcement mechanisms behind the RICS AI Responsible Use Standard helps firms appreciate why compliance matters.

RICS Enforcement Powers

Because this is a professional standard, non-compliance can result in:

- Professional conduct investigations initiated by RICS

- Disciplinary proceedings against individual members or firms

- Practice restrictions limiting scope of services

- Fines and penalties for serious or repeated violations

- Expulsion from RICS membership in extreme cases

These consequences carry significant professional and commercial implications, potentially affecting:

- Professional indemnity insurance coverage and premiums

- Client confidence and market reputation

- Ability to compete for certain types of work

- Legal liability in negligence claims

Professional Indemnity Insurance Considerations

Insurers increasingly scrutinize AI use in professional services. Firms should:

- Notify insurers of AI system adoption

- Verify coverage extends to AI-assisted work

- Document compliance with RICS standards to support claims defense

- Review policy exclusions related to technology use

Failure to comply with the RICS standard may provide insurers grounds to deny coverage for claims involving AI-assisted work.

Client Legal Recourse

Clients harmed by non-compliant AI use may pursue:

- Professional negligence claims based on standard of care breaches

- Breach of contract actions if engagement terms specified compliance

- Regulatory complaints to RICS triggering investigations

- Public disclosure of AI-related failures, with resulting reputational damage to the firm

Robust compliance programs protect firms from these risks while demonstrating professional commitment to quality and accountability.

Conclusion: Embracing AI Responsibly in Surveying Practice

The RICS AI Responsible Use Standard represents a watershed moment for the surveying profession. Rather than restricting innovation, the standard provides a framework enabling surveyors to harness AI's transformative potential while maintaining the professional integrity clients expect.

Key Success Factors

✅ Proactive compliance rather than reactive responses to enforcement

✅ Cultural integration of responsible AI principles throughout the organization

✅ Continuous learning as AI technologies and applications evolve

✅ Client-centric transparency building trust through open communication

✅ Professional judgment primacy ensuring human expertise remains central

For surveyors conducting building surveys, preparing valuations, or providing expert witness services, the standard offers clarity on navigating AI integration responsibly.

Actionable Next Steps

Immediate Actions (This Week):

- Conduct an inventory of all AI systems currently in use

- Schedule a firm-wide meeting to discuss compliance requirements

- Review existing client engagement templates for AI disclosure gaps

- Identify a compliance champion to lead implementation efforts

Short-Term Actions (This Month):

- Complete material impact assessments for all identified AI systems

- Begin drafting your firm's risk register

- Contact your professional indemnity insurer about AI use disclosure

- Research training resources for staff AI literacy development

Medium-Term Actions (This Quarter):

- Finalize and implement responsible AI use policies

- Update all client-facing documentation with AI disclosures

- Establish quality assurance sampling protocols

- Conduct initial staff training on the standard's requirements

Long-Term Actions (Ongoing):

- Maintain quarterly risk register reviews

- Monitor AI technology developments and assess implications

- Participate in industry discussions on AI best practices

- Continuously refine policies based on operational experience

The surveying profession has always balanced tradition with innovation, maintaining professional standards while embracing tools that enhance service quality. The RICS AI Responsible Use Standard continues this tradition, ensuring that as AI transforms surveying practice, the profession's commitment to accuracy, accountability, and client service remains unwavering.

By understanding and implementing these guidelines, surveyors position themselves not just for compliance, but for competitive advantage in an increasingly technology-driven market. The firms that master responsible AI use will deliver superior insights, enhanced efficiency, and uncompromising professional quality—exactly what clients need when making critical property decisions.

References

[1] AI Responsible Use Standard – https://ww3.rics.org/uk/en/journals/construction-journal/ai-responsible-use-standard.html

[2] RICS Launches Landmark Global Standard on Responsible Use of AI in Surveying – https://www.rics.org/news-insights/rics-launches-landmark-global-standard-on-responsible-use-of-ai-in-surveying

[3] Responsible Use of AI – https://www.rics.org/profession-standards/rics-standards-and-guidance/conduct-competence/responsible-use-of-ai

[4] Navigating the New RICS AI Standard: What It Means for Surveyors – https://www.artefact.com/blog/navigating-the-new-rics-ai-standard-what-it-means-for-surveyors/

[5] RICS Global Standard on Responsible AI Use in Surveying Practice Now in Effect – https://www.lexisnexis.co.uk/legal/news/rics-global-standard-on-responsible-ai-use-in-surveying-practice-now-in-effect