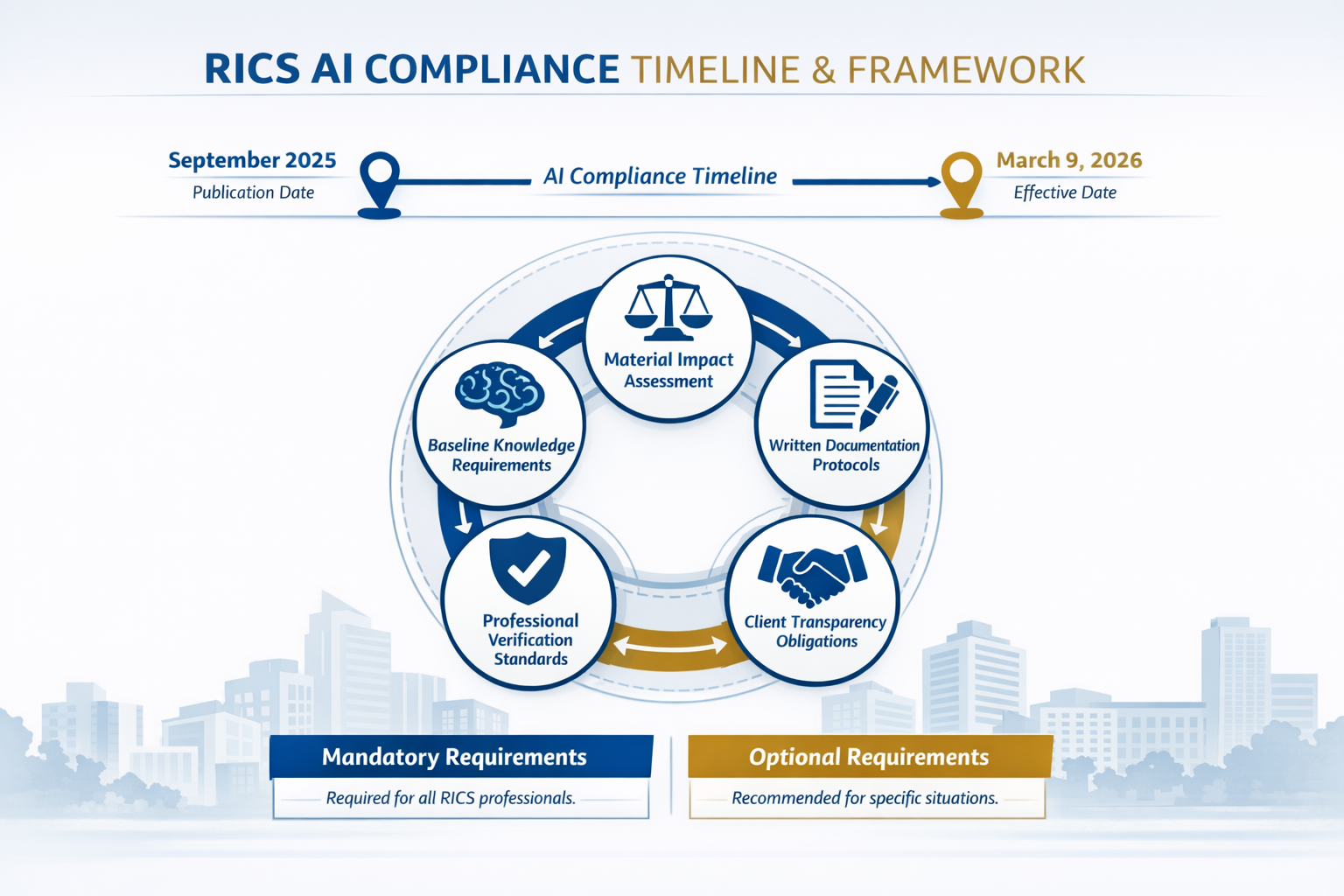

Artificial intelligence has quietly infiltrated surveying practices across the UK, yet until March 2026, no mandatory framework existed to govern its use. The RICS Professional Standard on Responsible AI Use in UK Surveying: Guidelines for Building Surveyors and Valuers in 2026 now establishes the first comprehensive regulatory framework for AI integration in property assessment, valuation, and expert witness work. Published in September 2025 and effective from 9 March 2026, this mandatory standard fundamentally reshapes how chartered surveyors must approach AI-assisted workflows while maintaining professional independence and accountability.[1]

This groundbreaking standard arrives at a critical juncture when AI tools promise enhanced accuracy in building surveys and valuations, yet carry significant risks of bias, hallucinations, and compromised professional judgment. The framework balances innovation with responsibility, ensuring that technology enhances rather than replaces the expertise that defines the surveying profession.

Key Takeaways

- ✅ Mandatory compliance became effective 9 March 2026 for all RICS members and regulated firms using AI systems with material impact on service delivery

- 📝 Written documentation is required for material impact determinations, risk registers, responsible use policies, and AI system governance assessments

- 👤 Professional judgment remains central – all AI outputs must be verified by qualified surveyors who accept responsibility for reliability decisions

- 🔍 Client transparency is mandatory – firms must disclose AI usage in writing before service delivery when material impact exists

- ⚖️ Baseline knowledge requirements cover AI types, limitations, hallucination risks, bias potential, and data usage considerations

Understanding the RICS Professional Standard on Responsible AI Use in UK Surveying: Guidelines for Building Surveyors and Valuers in 2026

What Makes This Standard Mandatory

Unlike guidance documents or best practice recommendations, the RICS Professional Standard on Responsible AI Use in UK Surveying: Guidelines for Building Surveyors and Valuers in 2026 carries mandatory compliance requirements for all RICS members and regulated firms operating in any jurisdiction.[1] This distinction is crucial: failure to comply could result in disciplinary action affecting professional standing and practice rights.

However, the standard includes an important clarification: members are not required to use AI.[1] The framework applies only when practitioners choose to integrate AI systems into their surveying workflows. This approach respects professional autonomy while establishing clear guardrails for those who adopt these technologies.

The standard has received positive responses both nationally and internationally since its September 2025 publication, reflecting widespread recognition that regulatory clarity was overdue in this rapidly evolving technological landscape.[1]

Defining Material Impact in AI-Assisted Surveying

Central to the standard is the concept of material impact—whether an AI output is capable of influencing service delivery and the nature of that influence.[1] This determination falls squarely on the shoulders of individual RICS members and regulated firms, requiring professional judgment about when AI crosses the threshold from peripheral tool to substantive contributor.

Consider these practical scenarios:

Material Impact Examples:

- AI systems analyzing structural defect patterns in Level 3 building surveys that inform repair recommendations

- Machine learning algorithms processing comparable property data for valuation reports

- Computer vision tools identifying roof damage in drone survey imagery

- Predictive models estimating building lifespan for insurance reinstatement valuations

Non-Material Impact Examples:

- Basic scheduling software with AI optimization

- Email categorization and administrative automation

- Simple data entry assistance

- Generic office productivity tools

When material impact is established, the standard requires written documentation of that determination and the reasoning behind it.[1] This creates an audit trail demonstrating thoughtful consideration rather than casual adoption of AI capabilities.
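One way to keep that audit trail consistent is to capture every determination in a fixed record. The following is a minimal Python sketch, not a format prescribed by the standard: the `MaterialImpactRecord` class and all of its field names are hypothetical, chosen only to show the decision being stored together with its reasoning.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MaterialImpactRecord:
    """Written record of one material impact determination (hypothetical schema)."""

    ai_system: str
    task: str
    has_material_impact: bool
    reasoning: str
    decided_by: str
    decided_on: date

    def __post_init__(self):
        # A determination without stated reasoning is of little use as an audit trail.
        if not self.reasoning.strip():
            raise ValueError("reasoning must not be empty")

    def to_text(self) -> str:
        """Render the record as one line suitable for a log or register."""
        verdict = "MATERIAL" if self.has_material_impact else "NOT MATERIAL"
        return (
            f"{self.decided_on.isoformat()} | {self.ai_system} | {self.task} | "
            f"{verdict} | {self.reasoning} | decided by {self.decided_by}"
        )
```

Rejecting an empty `reasoning` field at construction time is a design choice in this sketch: it makes it impossible to store a determination without the justification the standard asks firms to write down.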

Baseline Knowledge Requirements for AI Users

The RICS Professional Standard on Responsible AI Use in UK Surveying: Guidelines for Building Surveyors and Valuers in 2026 establishes foundational competency requirements for any member using AI systems. These baseline knowledge areas include:[1]

🧠 Different Types of AI and Their Workings

- Understanding distinctions between rule-based systems, machine learning, and generative AI

- Recognizing how training data shapes AI behavior and outputs

- Knowing the difference between narrow AI (task-specific) and general AI concepts

⚠️ Limitations and Failure Modes

- Identifying circumstances where AI systems produce unreliable results

- Understanding edge cases and scenarios outside training parameters

- Recognizing when AI confidence scores may be misleading

🎭 Hallucination Risks

- Awareness that AI can generate plausible but entirely fabricated information

- Understanding why generative AI may "fill gaps" with invented data

- Knowing verification protocols to detect erroneous outputs

⚖️ Bias in AI Systems

- Recognizing how training data bias translates into output bias

- Understanding demographic, geographic, and temporal bias risks

- Identifying potential discrimination in property valuations or assessments

🔒 Data Usage and Data Risks

- Comprehending data privacy implications of AI processing

- Understanding data security vulnerabilities in AI systems

- Knowing GDPR and data protection obligations when using AI tools

These requirements ensure practitioners approach AI with appropriate skepticism and understanding rather than treating these systems as infallible black boxes.

Core Compliance Requirements of the RICS Professional Standard on Responsible AI Use in UK Surveying: Guidelines for Building Surveyors and Valuers in 2026

System Governance and Pre-Use Assessment

Before deploying AI systems, the standard mandates multiple governance assessment steps that must be recorded in writing.[1][3] This proactive approach prevents hasty adoption of unsuitable or risky technologies.

Appropriate Tool Assessment

RICS-regulated firms must assess and record in writing whether AI is the most appropriate tool for the specific surveying task at hand.[2] This evaluation considers:

- The nature of surveying services being provided

- The specific task requirements and complexity

- Available alternative tools and methodologies

- Comparative advantages and disadvantages

- Resource implications and efficiency gains

For instance, when conducting a measured building survey, firms must document why AI-enhanced laser scanning processing offers advantages over traditional measurement verification methods, or conversely, why conventional approaches remain superior for particular building types or conditions.

Due Diligence Frameworks

The standard requires established frameworks for evaluating AI systems before adoption.[1][3] These frameworks should address:

| Assessment Category | Key Questions |
|---|---|
| Technical Capability | Does the system perform reliably for our specific surveying applications? |
| Training Data Quality | Is the AI trained on relevant UK property data and building types? |
| Vendor Credibility | Does the provider demonstrate responsible AI development practices? |
| Integration Requirements | Can the system integrate with existing surveying workflows and software? |
| Support and Updates | Are ongoing maintenance and improvement commitments adequate? |
| Cost-Benefit Analysis | Do efficiency gains justify implementation costs and risks? |

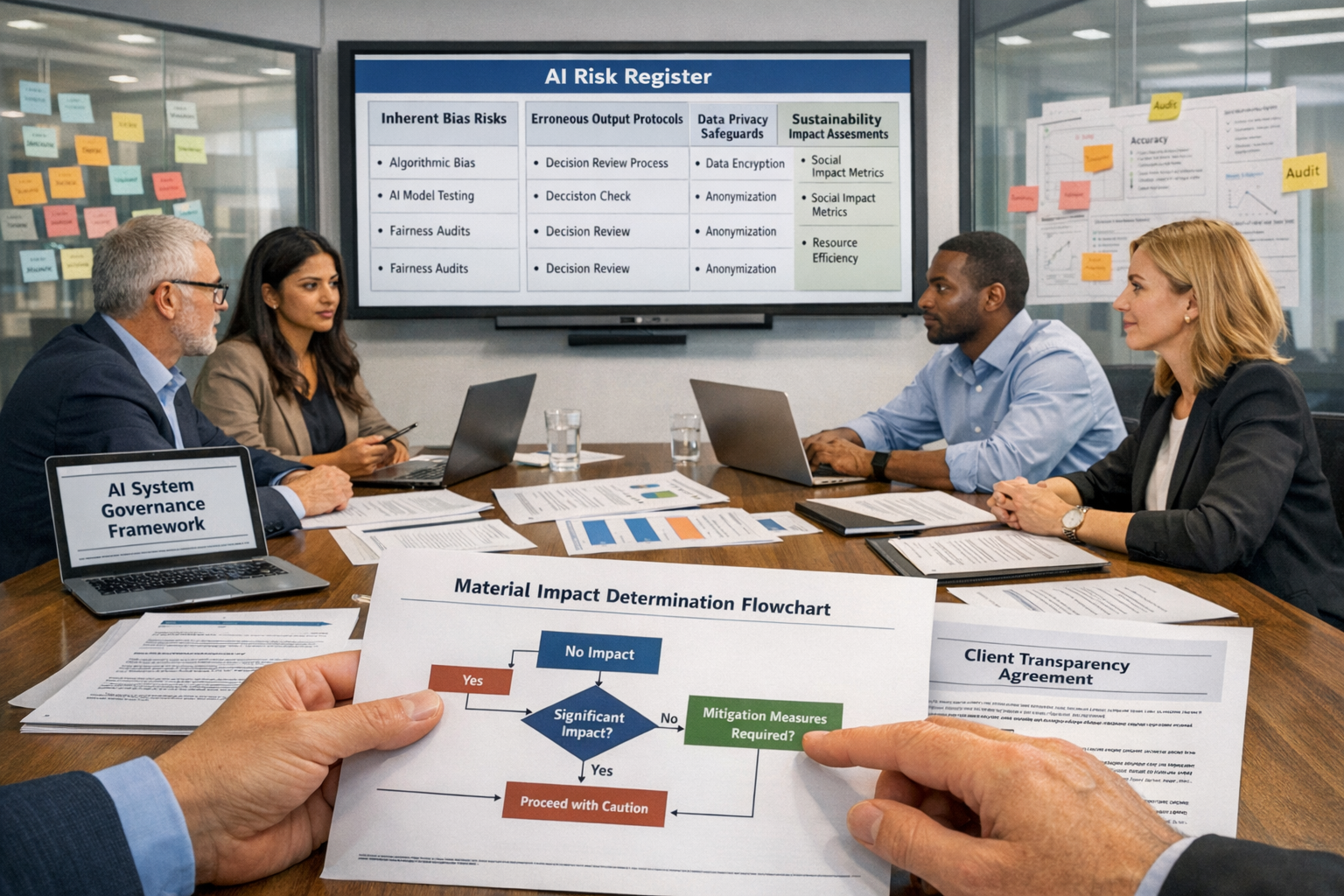

Risk Registers and Responsible Use Policies

RICS-regulated firms using or intending to use AI systems must develop and implement responsible AI use policies informed by comprehensive risk registers.[2][7][8] These documents form the foundation of organizational AI governance.

Risk Register Components

The risk register must document overarching risks including:[2]

🎯 Inherent Bias Risks

- Geographic bias (e.g., AI trained predominantly on London properties applied to regional markets)

- Property type bias (e.g., systems optimized for residential properties used for commercial assessments)

- Temporal bias (e.g., AI trained on pre-pandemic data applied to post-pandemic market conditions)

- Demographic bias (e.g., valuation algorithms that inadvertently discriminate based on neighborhood characteristics)

❌ Erroneous Output Risks

- Hallucination scenarios specific to surveying contexts

- Calculation errors in structural load assessments

- Misidentification of building materials or defects

- Incorrect comparable property selections for valuations

🔐 Data Privacy and Security Risks

- Client confidentiality breaches through cloud-based AI processing

- Unauthorized data retention by AI service providers

- Cross-contamination of client data in shared AI systems

- GDPR compliance failures in data handling

⚡ Operational Risks

- System downtime affecting service delivery timelines

- Dependency on external AI providers

- Skills gaps in AI verification and oversight

- Professional indemnity insurance implications
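The four risk groups above map naturally onto a small data structure. The sketch below is one possible shape for a risk register, assuming a firm wants to check that every group has at least one entry; `RiskCategory`, `RiskEntry`, and `uncovered_categories` are hypothetical names and are not taken from the standard.

```python
from dataclasses import dataclass
from enum import Enum


class RiskCategory(Enum):
    BIAS = "Inherent bias"
    ERRONEOUS_OUTPUT = "Erroneous output"
    DATA_PRIVACY_SECURITY = "Data privacy and security"
    OPERATIONAL = "Operational"


@dataclass
class RiskEntry:
    """One line of the register (hypothetical fields)."""

    category: RiskCategory
    description: str
    mitigation: str
    owner: str


def uncovered_categories(register: list[RiskEntry]) -> set[RiskCategory]:
    """Return the risk categories that have no entry in the register yet."""
    covered = {entry.category for entry in register}
    return set(RiskCategory) - covered
```

Running `uncovered_categories` during a policy review gives a quick indication of which risk groups still need to be considered before the register can be called complete.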

Responsible Use Policy Development

The responsible use policy translates risk awareness into operational protocols. Effective policies address:

- Approved AI systems and applications – which tools are authorized for which surveying tasks

- Prohibited uses – scenarios where AI must not be deployed

- Verification protocols – how AI outputs must be checked and validated

- Documentation requirements – what records must be maintained

- Training obligations – ensuring staff competency in AI oversight

- Client communication standards – transparency requirements and disclosure templates

- Incident response procedures – actions when AI errors are detected

- Regular review schedules – ensuring policies remain current as AI capabilities evolve

Professional Verification and Accountability

Perhaps the most critical aspect of the RICS Professional Standard on Responsible AI Use in UK Surveying: Guidelines for Building Surveyors and Valuers in 2026 is its unwavering emphasis on human professional judgment and accountability.

Mandatory Output Verification

The standard requires that all AI output be verified by a qualified professional.[3] This non-negotiable requirement ensures that generative AI output cannot replace professional judgment without human assessment and approval.[3]

For building surveyors conducting property inspections, this means:

- ✅ AI-identified defects must be physically verified on-site

- ✅ AI-generated repair cost estimates must be reviewed against current market rates and professional experience

- ✅ AI-suggested comparable properties for valuations must be assessed for true comparability

- ✅ AI-produced report sections must be edited for accuracy, relevance, and professional standards

Professional Judgment at the Core

The standard places professional judgment of the surveyor—encompassing knowledge, skills, experience, and professional scepticism—at the heart of any AI-assisted workflow.[4] This philosophical position recognizes that AI tools, however sophisticated, lack the contextual understanding, ethical reasoning, and professional responsibility that define chartered surveying practice.

A named qualified surveyor must accept responsibility for reliability decisions.[4] This personal accountability cannot be delegated to algorithms or diffused across technological systems. When a Level 2 survey report incorporates AI-assisted analysis, a specific RICS member must certify that the final output meets professional standards and accurately represents property conditions.

This approach preserves the fundamental principle that technology serves the professional, not the reverse.
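The verification and accountability rules described in this section can be expressed as a simple gate in a reporting workflow. The following Python sketch is illustrative only: `AIOutput`, `pending_verification`, and `sign_off` are hypothetical names, and the rule encoded here (no sign-off while any AI output is unverified or no surveyor is named) is one reading of the requirement rather than wording from the standard.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AIOutput:
    """One AI-produced item feeding a report (hypothetical structure)."""

    description: str
    verified_by: Optional[str] = None  # surveyor who checked it; None until verified


def pending_verification(outputs: List[AIOutput]) -> List[AIOutput]:
    """Return the AI outputs that no surveyor has verified yet."""
    return [o for o in outputs if not o.verified_by]


def sign_off(outputs: List[AIOutput], named_surveyor: str) -> str:
    """Allow sign-off only when every AI output is verified and a surveyor is named."""
    if not named_surveyor.strip():
        raise ValueError("a named qualified surveyor is required")
    pending = pending_verification(outputs)
    if pending:
        items = ", ".join(o.description for o in pending)
        raise ValueError(f"unverified AI outputs: {items}")
    return f"Signed off by {named_surveyor}; {len(outputs)} AI output(s) verified"
```

Raising an error instead of returning a flag means a report cannot be issued by accident while an AI-generated finding is still waiting for a surveyor's review.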

Client Transparency and Communication Requirements

Mandatory Written Disclosure

The RICS Professional Standard on Responsible AI Use in UK Surveying: Guidelines for Building Surveyors and Valuers in 2026 establishes clear transparency obligations toward clients. When AI systems have material impact on service delivery, firms must make clear to clients in writing and in advance when and for what purpose AI will be used.[2]

This disclosure requirement serves multiple purposes:

📋 Informed Consent – clients can make educated decisions about engaging services that incorporate AI

🔍 Expectation Management – clients understand the role of technology in their survey or valuation

⚖️ Risk Awareness – clients are alerted to potential limitations or considerations

📄 Contractual Clarity – the scope and methodology of services are explicitly defined

Contractual Documentation Standards

Contractual documents must detail AI usage comprehensively.[2] Effective disclosure language includes:

Example Disclosure Statement:

"In conducting your building survey, we will utilize AI-enhanced thermal imaging analysis to identify potential insulation defects and moisture ingress patterns. This AI system processes thermal data to highlight temperature anomalies that may indicate building defects. All AI-identified concerns will be physically verified by our qualified surveyor during the site inspection. The AI system serves as a supplementary analytical tool; final conclusions and recommendations remain the professional judgment and responsibility of our chartered surveyor."

Client Information Rights

The standard requires firms to provide written information on request about AI systems used.[2] Clients may legitimately ask:

- What specific AI tools were employed in their survey or valuation?

- How was the AI output verified and validated?

- What limitations does the AI system have?

- Who is the named qualified surveyor responsible for AI-assisted work?

- How does AI use affect professional indemnity insurance coverage?

Firms should prepare standardized responses to these questions, ensuring consistent and accurate communication across all client interactions.
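Standardized wording of this kind lends itself to templating. As a minimal sketch, the example disclosure statement earlier in this section can be reduced to a reusable template with Python's standard-library `string.Template`; the template wording, the placeholder names, and the `build_disclosure` helper are all hypothetical and would need to be adapted to each firm's engagement letters.

```python
from string import Template

# Hypothetical template wording, adapted from the example disclosure statement.
DISCLOSURE_TEMPLATE = Template(
    "In conducting your $service, we will use $ai_tool to $purpose. "
    "All AI-identified concerns will be verified by our qualified surveyor, "
    "$surveyor, who remains responsible for the final conclusions and "
    "recommendations."
)


def build_disclosure(service: str, ai_tool: str, purpose: str, surveyor: str) -> str:
    """Fill every placeholder in the template with the supplied details."""
    return DISCLOSURE_TEMPLATE.substitute(
        service=service, ai_tool=ai_tool, purpose=purpose, surveyor=surveyor
    )
```

Because every placeholder is a required argument, a disclosure cannot be generated with the tool, the purpose, or the responsible surveyor left blank.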

Sustainability Considerations in AI Development

One innovative aspect of the RICS Professional Standard on Responsible AI Use in UK Surveying: Guidelines for Building Surveyors and Valuers in 2026 is its attention to environmental responsibility. Members and regulated firms developing AI systems must carry out and record in writing a sustainability impact assessment of the proposed AI system.[2]

This requirement acknowledges the significant environmental footprint of AI technologies:

Environmental Impact Factors

⚡ Energy Consumption

- Training large AI models requires substantial computational power

- Ongoing inference operations consume electricity continuously

- Data center cooling and infrastructure add to energy demands

🌍 Carbon Footprint

- Geographic location of servers affects carbon intensity

- Renewable energy sourcing reduces environmental impact

- Model efficiency affects sustainability directly: a more efficient model needs less computation, and therefore less energy, for the same task

♻️ Resource Utilization

- Hardware manufacturing and disposal considerations

- E-waste implications of specialized AI computing equipment

- Lifecycle assessment of AI infrastructure

Sustainability Assessment Framework

Firms developing proprietary AI tools for surveying applications should evaluate:

| Assessment Area | Considerations |
|---|---|
| Necessity | Is AI development justified, or are lower-impact alternatives adequate? |
| Efficiency | Can the AI system be optimized to reduce computational requirements? |
| Infrastructure | Are servers powered by renewable energy sources? |
| Lifecycle | What is the expected useful life and disposal plan for AI hardware? |
| Offsetting | Are carbon offset programs in place for unavoidable emissions? |

This forward-thinking requirement positions RICS at the forefront of sustainable technology adoption in professional services.

Practical Implementation for Building Surveyors and Valuers

AI Applications in Building Surveys

Building surveyors can leverage AI responsibly across multiple survey types while maintaining compliance with the standard:

Defect Identification and Analysis

AI Capability: Computer vision systems trained to identify common building defects from photographs

Compliance Approach:

- Document that AI serves as a preliminary screening tool

- Verify all AI-identified defects through physical inspection

- Record instances where AI missed defects that human inspection found

- Maintain a risk register noting AI limitations with unusual building types

When conducting a full building survey, AI image analysis might flag potential damp issues, but the surveyor must physically verify moisture levels, investigate underlying causes, and assess severity through professional expertise.

Thermal Imaging Enhancement

AI Capability: Pattern recognition in thermal imagery highlighting insulation gaps and moisture ingress

Compliance Approach:

- Disclose AI-enhanced thermal analysis in client engagement letters

- Validate AI-identified thermal anomalies with additional diagnostic tools

- Apply professional judgment to distinguish between AI false positives and genuine defects

- Document verification methodology in survey reports

Structural Analysis Support

AI Capability: Predictive modeling of structural load distributions and stress points

Compliance Approach:

- Ensure AI models are trained on appropriate UK building standards and regulations

- Verify AI calculations against manual structural assessment methods

- Engage structural engineers for complex scenarios regardless of AI confidence levels

- Maintain clear documentation of the AI's role versus professional engineering judgment

AI Applications in Property Valuation

Valuers face particular challenges in AI integration given the subjective elements of property assessment:

Comparable Property Selection

AI Capability: Machine learning algorithms identifying comparable properties based on multiple parameters

Compliance Approach:

- Review all AI-suggested comparables for genuine comparability

- Apply professional judgment to market conditions AI may not capture

- Document reasoning when overriding or supplementing AI selections

- Maintain awareness of potential geographic or property type bias in AI training data

When preparing leasehold extension valuations, AI might suggest comparables based on statistical similarity, but the valuer must assess whether these truly reflect the specific lease terms, location nuances, and market sentiment affecting the subject property.

Market Trend Analysis

AI Capability: Predictive analytics forecasting property value trends

Compliance Approach:

- Treat AI predictions as one input among multiple market indicators

- Apply professional skepticism to AI forecasts, particularly in volatile markets

- Disclose to clients that AI predictions carry inherent uncertainty

- Document material differences between AI projections and professional judgment

Automated Valuation Models (AVMs)

AI Capability: Algorithm-driven property valuations based on statistical analysis

Compliance Approach:

- Recognize AVMs as screening tools, not substitutes for professional valuation

- Verify AVM outputs against physical property inspection findings

- Identify scenarios where AVMs are inappropriate (unique properties, rapidly changing markets, properties requiring significant adjustment)

- Maintain named surveyor accountability for the final valuation figure

Common Compliance Challenges and Solutions

Challenge 1: Determining Material Impact

The Issue: Uncertainty about whether specific AI uses cross the material impact threshold

Solution Approach:

- Develop internal decision matrices with examples

- Consult with professional indemnity insurers on their perspective

- Err on the side of caution: when uncertain, treat the use as having material impact

- Document reasoning for all determinations, creating precedent for future decisions
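An internal decision matrix of the kind suggested above can be very small. The sketch below assumes a firm screens each AI use with a fixed set of questions and treats anything other than a clear "no" as material, which mirrors the cautious default in the list; the `Answer` enum, the two screening questions, and `treat_as_material` are hypothetical and are not drawn from the standard.

```python
from enum import Enum


class Answer(Enum):
    YES = "yes"
    NO = "no"
    UNSURE = "unsure"


# Hypothetical screening questions; a firm would substitute its own.
QUESTIONS = (
    "Does the AI output inform findings, figures or recommendations?",
    "Could an error in the AI output change the advice given to the client?",
)


def treat_as_material(answers: dict) -> bool:
    """Material unless every screening question is answered with a clear NO."""
    missing = [q for q in QUESTIONS if q not in answers]
    if missing:
        raise KeyError(f"unanswered questions: {missing}")
    # Both YES and UNSURE count towards material impact (cautious default).
    return any(answers[q] is not Answer.NO for q in QUESTIONS)
```

Treating `UNSURE` the same as `YES` keeps the matrix conservative: the only way to classify a use as non-material is to answer every question with a definite no.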

Challenge 2: Vendor Transparency Limitations

The Issue: AI system providers may not disclose training data, algorithms, or limitations

Solution Approach:

- Prioritize vendors with transparent AI practices

- Include contractual requirements for AI system documentation

- Conduct independent testing of AI systems on known scenarios

- Maintain heightened verification protocols when vendor transparency is limited

Challenge 3: Keeping Pace with AI Evolution

The Issue: AI capabilities evolve rapidly, potentially outpacing policy updates

Solution Approach:

- Schedule quarterly reviews of responsible use policies

- Assign specific staff responsibility for AI governance monitoring

- Participate in RICS forums and industry discussions on AI developments

- Implement agile policy frameworks that accommodate technological change

Challenge 4: Client Resistance to AI Disclosure

The Issue: Some clients may react negatively to AI use disclosure

Solution Approach:

- Frame AI as an enhancement tool that improves accuracy and efficiency

- Emphasize professional verification and accountability

- Provide examples of how AI improves service quality

- Offer AI and non-AI service options where feasible

Challenge 5: Balancing Efficiency and Compliance

The Issue: Documentation requirements may seem to negate AI efficiency gains

Solution Approach:

- Develop standardized templates for common documentation needs

- Integrate compliance documentation into existing quality management systems

- Automate routine compliance tasks (e.g., client disclosure generation)

- View compliance as risk mitigation that protects long-term business sustainability

The Future of AI in UK Surveying Practice

The RICS Professional Standard on Responsible AI Use in UK Surveying: Guidelines for Building Surveyors and Valuers in 2026 represents the beginning rather than the end of AI governance evolution in the profession. Several trends will likely shape future developments:

Increasing AI Sophistication

As AI capabilities advance, the line between tool and decision-maker may blur further. Future standard revisions may need to address:

- Autonomous AI systems requiring minimal human input

- AI systems that explain their reasoning processes

- Integration of multiple AI systems creating complex technological ecosystems

- AI-generated expert witness reports and testimony

Enhanced Verification Tools

Ironically, AI may help verify AI. Second-generation tools designed specifically to detect errors, bias, and hallucinations in first-generation AI outputs could strengthen compliance frameworks through this "AI auditing AI" approach.

Professional Education Evolution

RICS and other professional bodies will likely expand AI competency requirements in continuing professional development (CPD) programs, potentially introducing:

- AI literacy certification for surveyors

- Specialized qualifications in AI-assisted surveying methodologies

- Ethics modules addressing AI decision-making scenarios

- Technical training in AI system evaluation and selection

Insurance and Liability Frameworks

Professional indemnity insurance markets will adapt to AI-related risks, potentially developing:

- AI-specific coverage endorsements

- Premium adjustments based on AI governance maturity

- Exclusions for non-compliant AI use

- Specialized policies for firms developing proprietary AI systems

Conclusion

The RICS Professional Standard on Responsible AI Use in UK Surveying: Guidelines for Building Surveyors and Valuers in 2026 establishes a robust framework that enables building surveyors and valuers to harness AI's potential while maintaining the professional independence, accountability, and ethical standards that define chartered surveying practice. By mandating baseline knowledge, written documentation, professional verification, client transparency, and sustainability considerations, the standard ensures technology enhances rather than compromises service quality.

For practitioners navigating this new regulatory landscape, success requires viewing compliance not as an administrative burden but as a professional opportunity: a chance to differentiate through responsible innovation, strengthen client trust through transparency, and future-proof practices against technological disruption.

Actionable Next Steps for RICS Members

- Conduct an AI audit of your current practice: identify all AI systems in use and assess material impact

- Develop or update your responsible use policy informed by a comprehensive risk register specific to your surveying services

- Implement documentation systems for material impact determinations, verification protocols, and client disclosures

- Review client engagement templates to incorporate AI usage disclosures where applicable

- Invest in professional development to build AI literacy and verification competencies

- Consult with your professional indemnity insurer about AI-related coverage and requirements

- Establish regular review schedules for AI governance policies to keep pace with technological evolution

The integration of AI into surveying practice is inevitable; the standard ensures this integration occurs responsibly, ethically, and in service of the profession's fundamental commitment to protecting the public interest through expert, independent property assessment. Whether conducting building surveys, preparing valuations, or providing expert witness services, RICS members can confidently embrace AI tools while maintaining the professional standards that distinguish chartered surveyors in the UK property market.

References

[1] AI responsible use standard – https://ww3.rics.org/uk/en/journals/construction-journal/ai-responsible-use-standard.html

[2] Responsible use of artificial intelligence in surveying practice, September 2025 – https://www.rics.org/content/dam/ricsglobal/documents/standards/Responsible-use-of-artificial-intelligence-in-surveying-practice_September-2025.pdf

[3] RICS introduces global standard for the responsible use of AI in surveying – https://www.eddisons.com/news/rics-introduces-global-standard-for-the-responsible-use-of-ai-in-surveying

[4] Navigating the new RICS AI standard, what it means for surveyors – https://www.artefact.com/blog/navigating-the-new-rics-ai-standard-what-it-means-for-surveyors/

[7] RICS global standard on responsible AI use in surveying practice now in effect – https://www.lexisnexis.co.uk/legal/news/rics-global-standard-on-responsible-ai-use-in-surveying-practice-now-in-effect

[8] RICS global standard, responsible use of AI in surveying practice – https://www.rlb.com/europe/insight/rics-global-standard-responsible-use-of-ai-in-surveying-practice/