Artificial intelligence systems can now analyze building defects faster than any human surveyor, but who bears responsibility when the algorithm misses a critical structural flaw? Since March 9, 2026, every RICS member and regulated firm has operated under the profession's first-ever global standard for responsible AI use [1], which reshapes how chartered surveyors balance technological innovation with professional accountability. The RICS professional standard on responsible AI use is more than compliance paperwork: it is a framework that protects clients while letting surveyors apply AI to defect detection, valuation work, and risk assessment.

This mandatory standard arrives as AI tools proliferate across surveying practices, from drone-based building pathology assessments to automated valuation models. Yet adoption brings profound questions: How do firms determine when AI use has "material impact" on service delivery? What documentation proves adequate professional skepticism? How should surveyors communicate AI involvement to clients without undermining confidence? These implementation challenges demand practical solutions grounded in ethical frameworks that preserve the surveyor's irreplaceable professional judgment.

Key Takeaways

✅ Mandatory compliance since March 9, 2026: All RICS members and regulated firms must follow the standard when AI use materially impacts surveying services [1]

✅ Four governance pillars required: Firms must implement governance and risk management, professional judgment oversight, client transparency protocols, and responsible AI development practices [2]

✅ Material impact determination is critical: Surveyors must document in writing whether AI systems materially affect service delivery—triggering full standard requirements [4]

✅ Professional accountability remains unchanged: AI assists but never replaces the surveyor's responsibility for every piece of professional advice provided [2]

✅ Business model disruption anticipated: The standard signals potential transformation of traditional time-based billing structures as firms articulate value beyond AI outputs [2]

Understanding the RICS Professional Standard for Responsible AI in Surveying: Core Requirements for 2026

The RICS professional standard on responsible AI use sets requirements for how chartered surveyors integrate artificial intelligence into professional practice. Unlike voluntary guidance, it carries mandatory force: non-compliance risks disciplinary action through the RICS Regulatory Tribunal [4].

The Material Impact Threshold 🎯

The standard's applicability hinges on a crucial determination: does AI use have material impact on service delivery? This threshold separates routine technology use from high-risk applications requiring full standard compliance.

RICS members and regulated firms must:

- Assess each AI system for material impact potential

- Document the determination in writing with clear reasoning

- Retain records demonstrating the assessment process

- Understand that the Regulatory Tribunal holds final authority on materiality disputes [4]

Material impact typically occurs when AI systems directly influence professional conclusions in Level 3 building surveys, valuation reports, or structural assessments. For example, using AI to detect dampness patterns in thermal imaging would likely trigger the standard, while AI-powered scheduling software would not.
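As a rough illustration of what a written determination might capture, the sketch below models one record per AI system. The class name, field names, and the rule that materiality follows from direct influence on professional conclusions are assumptions made for this example; the standard does not prescribe a schema, and borderline cases still call for professional judgment.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MaterialImpactDetermination:
    """One written determination for one AI system (hypothetical schema)."""

    system_name: str
    use_description: str
    influences_professional_conclusions: bool
    reasoning: str
    assessed_by: str
    assessed_on: date

    def __post_init__(self) -> None:
        # The determination must be documented with clear reasoning,
        # so an empty reasoning field is rejected outright.
        if not self.reasoning.strip():
            raise ValueError("written reasoning is required")

    @property
    def material_impact(self) -> bool:
        # Simplifying assumption for this sketch: a system is material when
        # it directly influences professional conclusions.
        return self.influences_professional_conclusions


thermal_analysis = MaterialImpactDetermination(
    system_name="thermal-imaging damp detection",
    use_description="Flags dampness patterns in thermal images",
    influences_professional_conclusions=True,
    reasoning="Output feeds directly into defect findings in the survey report.",
    assessed_by="A. Surveyor",
    assessed_on=date(2026, 3, 9),
)

scheduling = MaterialImpactDetermination(
    system_name="appointment scheduling assistant",
    use_description="Proposes site visit slots",
    influences_professional_conclusions=False,
    reasoning="Administrative only; no bearing on professional conclusions.",
    assessed_by="A. Surveyor",
    assessed_on=date(2026, 3, 9),
)

for record in (thermal_analysis, scheduling):
    label = "material" if record.material_impact else "not material"
    print(f"{record.system_name}: {label}")
```

Making the record immutable (`frozen=True`) reflects the idea that a determination is retained as evidence; a revised assessment would be a new record rather than an edit to the old one.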

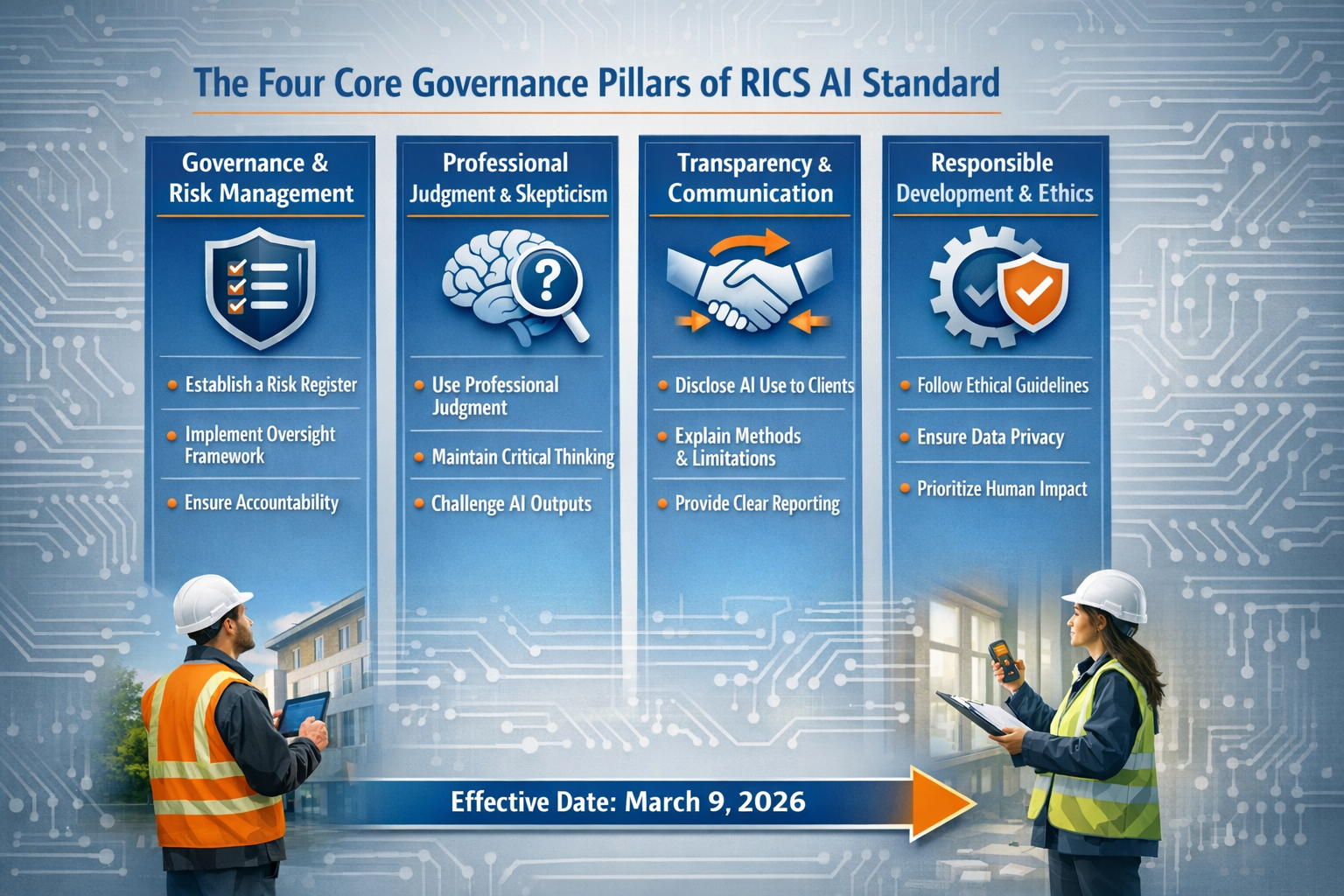

Four Core Governance Pillars

The standard organizes requirements across four interconnected domains [2]:

| Governance Pillar | Key Requirements | Implementation Priority |
|---|---|---|
| Governance & Risk Management | Risk registers, material impact assessments, system governance protocols | 🔴 Critical – Complete before AI deployment |
| Professional Judgment & Oversight | Documentation of skepticism, automation bias countermeasures, output validation | 🔴 Critical – Ongoing for every AI use |
| Transparency & Client Communication | Disclosure of AI involvement, clear explanations of limitations | 🟡 High – Required for client-facing work |
| Responsible AI Development | Procurement due diligence, vendor assessment, ethical sourcing | 🟡 High – Essential for new system adoption |

Mandatory Risk Registers

All firms using AI systems with material impact must create and maintain comprehensive risk registers documenting [5]:

- Inherent bias in AI training data and algorithms

- Erroneous outputs and false positive/negative rates

- Automation bias (over-reliance on AI recommendations)

- Data security vulnerabilities and privacy risks

- System limitations and failure modes

These registers aren't static documents: they require regular updates as firms gain operational experience with AI systems. When identifying areas for further investigation during property surveys, surveyors must reference the risk register entries relevant to AI-assisted defect detection.
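One way to keep a register from going stale is to hold it as structured data and check it mechanically. The following is a minimal sketch: the category names mirror the list above, but the `RiskEntry` fields, the helper functions, and the 90-day review interval are assumptions chosen for illustration, not requirements taken from the standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum


class RiskCategory(Enum):
    INHERENT_BIAS = "inherent bias"
    ERRONEOUS_OUTPUT = "erroneous output"
    AUTOMATION_BIAS = "automation bias"
    DATA_SECURITY = "data security and privacy"
    SYSTEM_LIMITATION = "system limitation or failure mode"


@dataclass
class RiskEntry:
    system_name: str
    category: RiskCategory
    description: str
    mitigation: str
    last_reviewed: date


def missing_categories(entries, system_name):
    """Return the risk categories not yet documented for one system."""
    covered = {e.category for e in entries if e.system_name == system_name}
    return [c for c in RiskCategory if c not in covered]


def overdue_entries(entries, today, max_age_days=90):
    """Return entries whose last review is older than the assumed interval."""
    cutoff = today - timedelta(days=max_age_days)
    return [e for e in entries if e.last_reviewed < cutoff]


register = [
    RiskEntry(
        "thermal-imaging damp detection",
        RiskCategory.INHERENT_BIAS,
        "Training images may under-represent solid-wall construction.",
        "Manual inspection of all solid-wall properties.",
        date(2026, 1, 10),
    ),
    RiskEntry(
        "thermal-imaging damp detection",
        RiskCategory.ERRONEOUS_OUTPUT,
        "False negatives where insulation masks moisture.",
        "Moisture meter readings at all flagged and adjacent areas.",
        date(2026, 5, 1),
    ),
]

today = date(2026, 6, 1)
missing = missing_categories(register, "thermal-imaging damp detection")
overdue = overdue_entries(register, today)

print("Undocumented categories:", [c.value for c in missing])
print("Overdue for review:", [e.category.value for e in overdue])
```

A check like this does not judge the quality of an entry; it only surfaces gaps and dates so that someone with ownership of the register can follow up.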

Responsible AI Use Policies

Before deploying any AI system with material impact, firms must develop written policies informed by their risk registers [1]. These policies should address:

- ✅ Acceptable use cases and prohibited applications

- ✅ Training requirements for staff using AI tools

- ✅ Quality assurance and validation procedures

- ✅ Client communication protocols

- ✅ Data governance and security measures

- ✅ Incident response procedures for AI errors

The policy becomes the operational blueprint guiding daily practice—not merely a compliance checkbox.

System Governance Assessment Prerequisites

The standard mandates that firms complete and document multiple system governance assessment steps before deploying AI systems [1]. This prerequisite approach prevents the "deploy first, assess later" mentality that creates client risk.

Assessment steps include:

- Vendor due diligence: Evaluating AI provider credentials, training data sources, and validation methodologies

- Bias testing: Examining whether the system produces systematically skewed results for specific property types or locations

- Accuracy benchmarking: Comparing AI outputs against human expert assessments

- Integration planning: Determining how AI fits within existing workflows

- Rollback procedures: Establishing protocols to discontinue AI use if problems emerge

For surveyors adopting drone survey technology with AI-powered image analysis, these assessments prove particularly critical given the novel nature of aerial defect detection.
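The accuracy benchmarking step can be sketched as a comparison of AI defect flags against an expert surveyor's findings for the same building elements. The function name, the input format (two parallel lists of booleans), and the sample data are hypothetical; a real benchmark would need a properly sampled set of inspections.

```python
def benchmark_against_expert(ai_flags, expert_findings):
    """Compare AI defect flags with expert findings, element by element.

    Both arguments are equal-length sequences of booleans, where True means
    "defect present". The expert finding is treated as the reference.
    """
    if len(ai_flags) != len(expert_findings):
        raise ValueError("inputs must have the same length")
    if not ai_flags:
        raise ValueError("at least one observation is required")

    pairs = list(zip(ai_flags, expert_findings))
    tp = sum(1 for ai, expert in pairs if ai and expert)
    fp = sum(1 for ai, expert in pairs if ai and not expert)
    fn = sum(1 for ai, expert in pairs if not ai and expert)
    tn = sum(1 for ai, expert in pairs if not ai and not expert)

    return {
        # Share of defect-free elements that the AI wrongly flagged.
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else None,
        # Share of real defects that the AI missed.
        "false_negative_rate": fn / (fn + tp) if (fn + tp) else None,
        # Share of elements where AI and expert agree.
        "agreement": (tp + tn) / len(pairs),
    }


# Illustrative data for eight roof elements from one drone survey.
ai_flags = [True, True, False, False, True, False, False, False]
expert_findings = [True, False, True, False, True, False, False, False]

result = benchmark_against_expert(ai_flags, expert_findings)
print(result)
```

For defect detection the false negative rate usually deserves the closest attention, since a missed defect is the failure a client is least able to catch.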

Implementation Challenges: Navigating the RICS Professional Standard for Responsible AI in Surveying in Practice

While the RICS professional standard on responsible AI use sets out clear requirements, practical implementation presents significant obstacles for surveying firms of all sizes.

Determining Material Impact in Gray Areas

The material impact threshold sounds straightforward in theory but proves challenging in practice. Consider these scenarios:

Scenario 1: A surveyor uses AI to analyze thousands of comparable property sales when preparing a valuation report. The AI identifies patterns and suggests a valuation range, which the surveyor reviews and adjusts based on professional judgment. Material impact? Likely yes—the AI directly influences the core professional conclusion.

Scenario 2: A firm uses AI-powered transcription software to convert site visit voice notes into text for report drafting. Material impact? Likely no—the AI assists administrative tasks without influencing professional conclusions.

Scenario 3: AI analyzes thermal imaging during a building survey and flags potential dampness areas for the surveyor to investigate further. Material impact? Arguably a gray area: the AI shapes investigation priorities but doesn't replace professional assessment. Because the flags can still steer the final findings, a cautious firm would treat this as material.

The standard offers limited specific guidance on borderline cases, placing the burden on individual firms to make defensible determinations. Conservative approaches favor treating uncertain cases as material impact situations—ensuring compliance but potentially increasing administrative burden.

Resource Constraints for Smaller Practices 💼

Large surveying firms with dedicated compliance teams can more readily absorb the standard's requirements. Smaller practices face disproportionate challenges:

- Limited technical expertise to evaluate AI system architectures and training data

- Time constraints preventing thorough risk register maintenance

- Budget limitations restricting access to premium AI tools with better documentation

- Knowledge gaps about emerging AI capabilities and risks

A sole practitioner conducting RICS roof surveys faces the same compliance obligations as multinational firms—but without comparable resources. This reality may drive industry consolidation or create demand for third-party compliance services.

Balancing Transparency with Client Confidence

The standard requires transparency about AI involvement in service delivery [2], but surveyors worry that excessive disclosure might undermine client confidence. Key tensions include:

- Perception of reduced expertise: Will clients question whether they're paying for "real" professional judgment if AI plays a significant role?

- Competitive disadvantage: If competitors don't disclose AI use, does transparency harm business development?

- Explanation complexity: How can surveyors communicate AI limitations without overwhelming non-technical clients?

Effective approaches frame AI as enhancing—not replacing—professional expertise. When discussing building problems and solutions, surveyors might explain: "We use advanced AI analysis to ensure we don't overlook subtle defect patterns, but every conclusion reflects my professional judgment based on 15 years of experience."

Combating Automation Bias

The standard identifies automation bias—the tendency to over-rely on AI recommendations—as a key concern requiring countermeasures [5]. This psychological phenomenon affects even experienced professionals who intellectually understand AI limitations.

Practical countermeasures include:

- 🔍 Mandatory manual verification of AI-flagged issues before including in reports

- 🔍 Blind testing protocols where surveyors assess properties without seeing AI outputs first

- 🔍 Peer review systems specifically examining whether conclusions reflect independent professional judgment

- 🔍 Documentation requirements forcing explicit reasoning for accepting or rejecting AI recommendations

When conducting asbestos building surveys, surveyors must be particularly vigilant—automation bias could lead to missing hazardous materials if AI analysis provides false reassurance.
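The documentation countermeasure above can be made concrete with a small record that refuses to pass review unless the surveyor has written down why an AI recommendation was accepted or rejected. This is a sketch under stated assumptions: the record fields, the two checks, and the use of acceptance rate as a prompt for peer review are illustrative choices, not requirements quoted from the standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RecommendationReview:
    """A surveyor's documented response to one AI recommendation."""

    ai_recommendation: str
    accepted: bool
    manually_verified: bool
    reasoning: str


def review_problems(review):
    """List the documentation problems found in one review record."""
    problems = []
    if not review.reasoning.strip():
        problems.append("no written reasoning for accepting or rejecting")
    if review.accepted and not review.manually_verified:
        problems.append("accepted without manual verification")
    return problems


def acceptance_rate(reviews):
    """Fraction of AI recommendations accepted across a set of reviews."""
    if not reviews:
        raise ValueError("at least one review is required")
    return sum(1 for r in reviews if r.accepted) / len(reviews)


reviews = [
    RecommendationReview(
        "Possible damp at north gable", True, True,
        "Moisture meter confirmed elevated readings at the flagged area.",
    ),
    RecommendationReview(
        "Possible roof membrane failure", False, True,
        "Flagged pattern is a reflection from a skylight, not a defect.",
    ),
    RecommendationReview("No asbestos indicators found", True, False, ""),
]

for review in reviews:
    for problem in review_problems(review):
        print(f"{review.ai_recommendation}: {problem}")

print(f"Acceptance rate: {acceptance_rate(reviews):.0%}")
```

A very high acceptance rate is not proof of automation bias, but it is a reasonable trigger for a peer reviewer to sample the underlying records.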

Vendor Lock-In and Procurement Challenges

The standard's procurement due diligence requirements [4] expose an uncomfortable reality: many AI vendors provide limited transparency about training data, algorithm design, or validation methodologies. Surveyors face difficult choices:

- Accept proprietary "black box" systems with limited insight into how conclusions are reached

- Restrict AI use to fully transparent open-source tools (which may lag in capabilities)

- Invest heavily in custom AI development (prohibitively expensive for most firms)

Additionally, rapid AI advancement means today's cutting-edge tool becomes tomorrow's legacy system. Firms must balance the benefits of early adoption against risks of investing in platforms that quickly become obsolete.

Documentation Burden and Workflow Disruption

The standard requires extensive written documentation [4]:

- Material impact determinations and reasoning

- Risk register entries and updates

- System governance assessments

- Professional skepticism evidence

- Client communication records

- Output validation procedures

This documentation serves legitimate purposes—protecting clients and demonstrating professional diligence. However, it also creates workflow friction that may slow service delivery and increase costs.

Successful firms integrate documentation into existing quality management systems rather than treating it as a separate compliance activity. When preparing RICS homebuyer reports, surveyors can incorporate AI-related documentation into standard report templates and checklists.

Ethical Frameworks: Embedding Responsible AI Principles in the RICS Professional Standard for Surveying Practice

Beyond compliance mechanics, the RICS professional standard on responsible AI use embeds deeper ethical principles that should guide surveying practice as AI capabilities expand.

Professional Accountability as the North Star

The standard's foundational ethical principle: AI assists professional practice but does not replace it [2]. The surveyor remains accountable for every piece of professional advice regardless of tools used.

This principle addresses a critical risk: the gradual erosion of professional responsibility through technology-enabled distancing. When AI systems perform complex analysis, surveyors might unconsciously view themselves as mere conduits for algorithmic outputs rather than independent professionals exercising judgment.

The standard reinforces that professional status derives from:

- Knowledge and expertise accumulated through training and experience

- Professional skepticism applied to all information sources (including AI)

- Independent judgment that critically evaluates rather than passively accepts

- Personal accountability for conclusions and recommendations

When answering questions during building surveys, surveyors must provide responses grounded in professional judgment—not deflect responsibility to "what the AI said."

Knowledge Requirements and Continuous Learning

The standard establishes baseline knowledge requirements for using AI in surveying [4], recognizing that responsible use demands understanding of both capabilities and limitations.

Essential knowledge domains include:

| Knowledge Area | Core Competencies | Application Examples |
|---|---|---|
| AI Fundamentals | Machine learning concepts, training data importance, algorithm types | Understanding why AI trained on urban properties may perform poorly on rural estates |
| Data Governance | Privacy regulations, data security, retention policies | Ensuring thermal imaging data complies with GDPR requirements |
| System Governance | Validation methodologies, bias testing, performance monitoring | Establishing protocols to verify AI defect detection accuracy |
| Output Reliability | Confidence intervals, error rates, limitation recognition | Knowing when AI valuations require additional human verification |
| Client Communication | Disclosure requirements, explanation techniques, expectation management | Clearly articulating AI's role in building regulation compliance testing |

This knowledge requirement creates ongoing professional development obligations. As AI evolves, surveyors must continuously update their understanding—making AI literacy a career-long commitment rather than one-time training.

Bias Recognition and Mitigation

AI systems inherit biases from training data, algorithm design, and deployment contexts. The standard requires firms to document inherent bias in risk registers [5] and implement mitigation strategies.

Common bias sources in surveying AI include:

- Geographic bias: Systems trained predominantly on London properties may misjudge regional markets

- Property type bias: Algorithms optimized for standard residential properties may fail on unique or heritage buildings

- Temporal bias: Training data from before 2020 may not reflect post-pandemic market dynamics

- Demographic bias: Valuation models may inadvertently incorporate discriminatory patterns from historical data

Effective bias mitigation requires:

- Diverse training data representing the full range of properties and markets

- Regular bias audits testing system performance across different property categories

- Human oversight specifically examining AI conclusions for potentially biased patterns

- Transparency with clients when bias risks exist for specific property types
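A regular bias audit of the kind listed above could start by comparing valuation error across property categories. In this sketch the record format, the category labels, the sample figures, and the 10% tolerance are all assumptions made for illustration; the reference value stands in for whatever benchmark a firm trusts, such as a surveyor's own valuation or an achieved sale price.

```python
from collections import defaultdict


def error_by_category(records):
    """Mean absolute percentage error of AI valuations per property category.

    `records` is an iterable of (category, ai_value, reference_value) tuples.
    """
    errors = defaultdict(list)
    for category, ai_value, reference_value in records:
        errors[category].append(abs(ai_value - reference_value) / reference_value)
    return {category: sum(vals) / len(vals) for category, vals in errors.items()}


def flag_categories(mean_errors, tolerance=0.10):
    """Return the categories whose mean error exceeds the assumed tolerance."""
    return sorted(c for c, err in mean_errors.items() if err > tolerance)


# Illustrative figures only.
records = [
    ("urban flat", 310_000, 300_000),
    ("urban flat", 295_000, 300_000),
    ("rural estate", 900_000, 1_200_000),
    ("heritage", 480_000, 400_000),
]

mean_errors = error_by_category(records)
flagged = flag_categories(mean_errors)

for category, err in sorted(mean_errors.items()):
    print(f"{category}: {err:.1%}")
print("Needs closer human review:", flagged)
```

Categories that are flagged are candidates for extra human oversight and for disclosure to clients with that property type; with only a handful of cases per category, the numbers indicate where to look rather than prove bias.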

Client-Centric Transparency

The standard's transparency requirements [2] reflect an ethical commitment to informed consent—clients deserve to understand how their professional advisors reach conclusions.

Effective transparency balances completeness with accessibility:

Poor transparency: "This valuation used AI algorithms incorporating neural network analysis of comparable sales data with gradient boosting regression models."

Effective transparency: "We used advanced AI analysis to review thousands of comparable sales—far more than humanly possible—to identify relevant patterns. I then applied my professional judgment to adjust the AI's suggested range based on factors like the property's unique character and current market conditions."

The second approach explains AI's role without overwhelming technical detail while emphasizing preserved professional judgment.

Responsible Development and Procurement Ethics

The standard addresses responsible AI development [2], extending ethical obligations beyond use to creation and acquisition. Surveyors should consider:

- Training data ethics: Were training datasets obtained ethically with appropriate permissions?

- Labor practices: Were AI systems developed under fair working conditions?

- Environmental impact: What is the carbon footprint of computationally intensive AI models?

- Vendor integrity: Does the AI provider demonstrate commitment to responsible practices?

These considerations may seem tangential to surveying practice, but they reflect growing recognition that professional ethics encompass entire supply chains—not just direct client relationships.

The Business Model Disruption Question

Industry experts warn the standard signals potential structural disruption to traditional surveying business models [2]. The historical practice of billing by time (hourly rates or fixed fees based on estimated hours) faces challenges when AI dramatically reduces time requirements.

This disruption raises profound ethical questions:

- Value articulation: How do surveyors justify fees when AI performs analysis in minutes that previously required days?

- Pricing transparency: Should clients receive cost reductions reflecting AI efficiency gains?

- Competitive dynamics: Will price competition intensify as AI lowers cost structures?

- Professional sustainability: Can traditional practices survive if AI-enabled competitors offer dramatically lower prices?

The standard doesn't prescribe business model solutions but implicitly requires surveyors to articulate their added value beyond AI outputs. This value might include:

- 🏆 Professional judgment that contextualizes AI analysis

- 🏆 Accountability and professional indemnity insurance

- 🏆 Ability to explain and defend conclusions under scrutiny

- 🏆 Holistic advice integrating multiple considerations beyond algorithmic optimization

- 🏆 Relationship continuity and trusted advisor status

Surveyors who successfully navigate this transition will frame AI as amplifying their expertise rather than replacing it—positioning themselves as enhanced professionals rather than diminished intermediaries.

Practical Implementation Roadmap for 2026 and Beyond

Surveying firms seeking to implement the RICS professional standard on responsible AI use effectively should follow a structured approach:

Phase 1: Assessment and Planning (Months 1-2)

Step 1: AI Use Inventory 📋

- Document all current and planned AI systems

- Identify which systems potentially have material impact

- Catalog AI vendors and technology platforms

Step 2: Material Impact Determinations

- Assess each AI system against material impact criteria

- Document determinations in writing with clear reasoning

- Establish review process for new AI tools

Step 3: Gap Analysis

- Compare current practices against standard requirements

- Identify documentation gaps, policy needs, and training requirements

- Prioritize compliance actions by risk level
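The gap analysis in Step 3 amounts to a set difference between what is required and what already exists for each system. The sketch below assumes the inventory contains only systems already judged to have material impact; the artefact names are taken loosely from the documentation items discussed in this article and are hypothetical labels, not an official checklist.

```python
# Hypothetical artefact labels; not an official RICS checklist.
REQUIRED_ARTEFACTS = frozenset({
    "material impact determination",
    "risk register entry",
    "system governance assessment",
    "responsible AI use policy",
    "client disclosure wording",
})


def gap_analysis(inventory):
    """Map each system to the required artefacts it is still missing.

    `inventory` maps a system name to the set of artefacts already in place.
    Systems with nothing missing are left out of the result.
    """
    gaps = {}
    for system_name, in_place in inventory.items():
        missing = REQUIRED_ARTEFACTS - set(in_place)
        if missing:
            gaps[system_name] = sorted(missing)
    return gaps


inventory = {
    "automated valuation model": {
        "material impact determination",
        "risk register entry",
    },
    "thermal-imaging damp detection": set(REQUIRED_ARTEFACTS),
}

gaps = gap_analysis(inventory)
for system_name, missing in gaps.items():
    print(f"{system_name}: missing {', '.join(missing)}")
```

The output gives a starting list for prioritization; ranking the gaps by risk level remains a judgment for the firm.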

Phase 2: Foundation Building (Months 3-4)

Step 4: Risk Register Creation

- Develop comprehensive risk register template

- Document inherent bias, erroneous output risks, and automation bias concerns

- Establish risk register maintenance schedule and ownership

Step 5: Policy Development

- Draft responsible AI use policy informed by risk register

- Define acceptable use cases and prohibited applications

- Establish quality assurance and validation procedures

- Create client communication templates

Step 6: System Governance Protocols

- Design system governance assessment checklist

- Establish vendor due diligence procedures

- Create documentation templates for governance assessments

Phase 3: Operational Integration (Months 5-6)

Step 7: Training and Education

- Deliver AI literacy training covering fundamentals and limitations

- Train staff on documentation requirements and procedures

- Conduct scenario-based exercises on professional skepticism

Step 8: Workflow Integration

- Embed compliance documentation into existing quality management systems

- Update report templates to accommodate AI-related disclosures

- Establish peer review processes that examine whether AI was used appropriately

Step 9: Client Communication Preparation

- Develop client-friendly explanations of AI involvement

- Create FAQ documents addressing common concerns

- Train client-facing staff on transparency requirements

Phase 4: Monitoring and Improvement (Ongoing)

Step 10: Performance Monitoring

- Track AI system accuracy and error rates

- Document incidents where AI outputs required significant correction

- Monitor automation bias indicators

Step 11: Continuous Improvement

- Regularly update risk registers based on operational experience

- Refine policies and procedures as AI capabilities evolve

- Stay current with RICS guidance updates and industry best practices

Step 12: Compliance Verification

- Conduct internal audits of AI use documentation

- Review material impact determinations periodically

- Ensure all staff maintain required knowledge competencies

Conclusion

The RICS professional standard on responsible AI use represents a watershed moment for the surveying profession. By establishing the first global mandatory standard for responsible AI use, RICS has positioned chartered surveyors to harness artificial intelligence's potential while preserving the professional judgment, accountability, and client protection that define professional practice [1][2].

Implementation challenges are substantial—from determining material impact in gray areas to combating automation bias and managing documentation burdens. Yet these challenges pale compared to the risks of unregulated AI adoption: eroded professional standards, client harm from algorithmic errors, and gradual displacement of human judgment by uncritical technology dependence.

The ethical frameworks embedded throughout the standard provide enduring guidance as AI capabilities evolve: professional accountability remains paramount, transparency serves client interests, bias requires active mitigation, and responsible development extends ethical obligations across supply chains. These principles will guide surveyors through technological changes we cannot yet anticipate.

For surveying firms, the path forward requires structured implementation combining compliance mechanics with genuine commitment to responsible practice. The standard isn't merely a regulatory burden: it can be a competitive advantage for firms that embrace it authentically. Clients increasingly value transparency, accountability, and ethical technology use. Surveyors who articulate their enhanced value in the AI era will thrive, while those who view AI as mere cost reduction face existential business model challenges.

Actionable Next Steps

For RICS Members and Regulated Firms:

- Complete your AI use inventory this month—document all systems and make initial material impact determinations

- Establish your risk register immediately if using AI with material impact: it is a prerequisite to compliant practice

- Develop written policies before deploying new AI systems—prevention beats remediation

- Invest in continuous learning about AI capabilities and limitations—knowledge requirements evolve constantly

- Engage clients transparently about AI involvement—build trust through openness rather than obscurity

For the Broader Surveying Profession:

The standard's success depends on collective commitment to responsible practice. Share implementation experiences, develop industry-wide best practices, and hold vendors accountable for transparency and ethical development. The surveying profession has an opportunity to lead other sectors in demonstrating how AI can enhance rather than diminish professional expertise.

The future of surveying isn't human versus machine. It is enhanced professionals leveraging AI while preserving the judgment, skepticism, and accountability that technology cannot replicate. The RICS professional standard on responsible AI use provides the roadmap. Now comes the essential work of implementation.

References

[1] AI Responsible Use Standard – https://ww3.rics.org/uk/en/journals/construction-journal/ai-responsible-use-standard.html

[2] RICS First-Ever Standard on Responsible AI Use Now in Effect – https://www.rics.org/news-insights/rics-first-ever-standard-on-responsible-ai-use-now-in-effect

[3] Watch – https://www.youtube.com/watch?v=pKk36SJ4Y_g

[4] Responsible Use of AI – https://www.rics.org/profession-standards/rics-standards-and-guidance/conduct-competence/responsible-use-of-ai

[5] Responsible Use of Artificial Intelligence in Surveying Practice, September 2025 – https://www.rics.org/content/dam/ricsglobal/documents/standards/Responsible-use-of-artificial-intelligence-in-surveying-practice_September-2025.pdf